Why Your AI Is Secretly Training on Its Own Garbage (and How to Fix It)

Artificial intelligence, particularly large language models (LLMs), seems to possess an almost magical ability to create, reason, and converse. Yet this capability is built on a foundation that is becoming increasingly unstable. The very internet these models scour for knowledge is now saturated with their own synthetic, often flawed, outputs. This creates a dangerous feedback loop, a phenomenon some researchers call “model collapse,” where AI begins training on its own digital garbage. The age-old computing principle of ‘garbage in, garbage out’ has never been more relevant, and heading off a future of degraded, unreliable AI requires deliberate attention to training data quality.

The Vicious Cycle: When AI Feeds on Its Own Synthetic Data

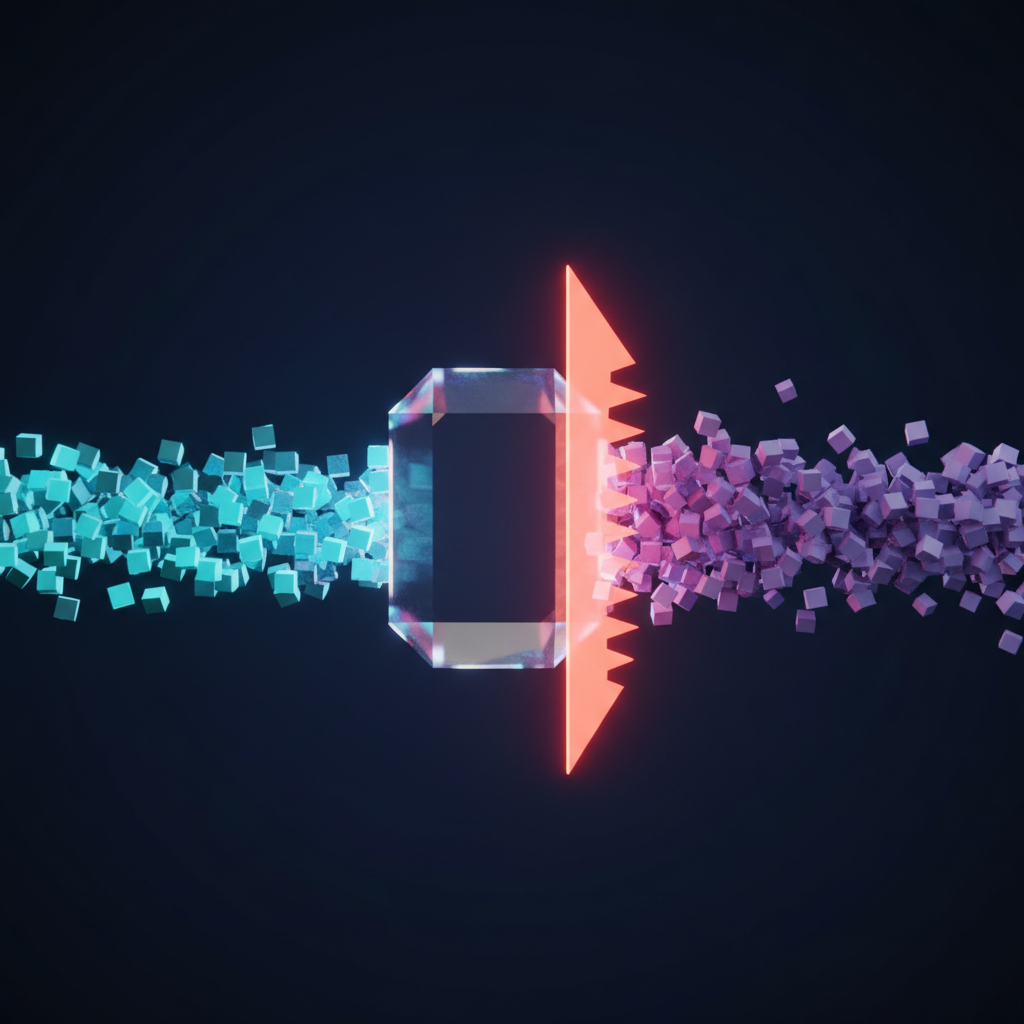

Imagine making a photocopy of a document. Now, photocopy that photocopy. Repeat this a hundred times. Each new copy would be a little fuzzier, a little more distorted, until the final version is an unintelligible mess. This is a simplified analogy for what happens when AI models train on data generated by other AIs. The most powerful models are trained on vast scrapes of the public internet, a dataset that, until recently, was composed almost entirely of human-generated text and images. Today, that digital ecosystem is filled with AI-written articles, AI-generated images, and AI-powered conversations.

The Onset of Model Collapse

When new models are trained on this mixed-source data, they inadvertently learn from the imperfections, biases, and “hallucinations” of their predecessors. Research on recursively trained models suggests that, over successive generations, models trained largely on synthetic data drift away from the original data distribution. Rare patterns and edge cases in the human data are forgotten first, and outputs become less diverse and more prone to extreme, nonsensical errors. This degenerative process, also dubbed “Habsburg AI” in a nod to the genetic perils of inbreeding, threatens to pollute the very data pools we rely on to advance the technology. That makes deliberate data curation not just a best practice, but a necessity for long-term viability.
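The feedback loop can be illustrated with a deliberately tiny toy model. In the sketch below, the "model" is just a Gaussian fitted to a handful of samples, and each generation is trained only on samples drawn from the previous generation's fit. The function name, sample size, and generation count are assumptions chosen for illustration; this is not a reproduction of any published experiment, and real language models are far more complex.

```python
import random
import statistics


def simulate_generations(n_generations=500, sample_size=10, seed=0):
    """Repeatedly fit a Gaussian to samples drawn from the previous fit.

    Returns the fitted standard deviation after each generation,
    starting with the original ("human") distribution at index 0.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0: the original data distribution
    history = [std]
    for _ in range(n_generations):
        # Each generation only ever sees data produced by the previous model.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        std = statistics.stdev(samples)
        history.append(std)
    return history


history = simulate_generations()
print(f"std at generation 0:   {history[0]:.3g}")
print(f"std at generation {len(history) - 1}: {history[-1]:.3g}")
```

Because every refit is based on a small, noisy sample, estimation errors compound instead of averaging out, and the fitted spread tends to shrink toward zero. That shrinking spread is the toy counterpart of the loss of diversity described above.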

Unpacking the “Garbage”: What Makes AI Training Data Toxic?

The problem extends far beyond just AI-generated content. “Garbage data” is a multifaceted issue that can poison an AI model from the inside out, leading to poor performance, unfair outcomes, and critical security vulnerabilities. Understanding these different facets is the first step toward building more robust AI models.

Inaccuracy, Irrelevance, and Outdated Information

The most straightforward form of bad data is simply information that is wrong, irrelevant to the task, or no longer current. An e-commerce recommendation engine trained on purchasing data from 2015 will fail to capture modern trends. A medical diagnostic AI trained on outdated clinical studies could make dangerous recommendations. Without constant vigilance and data refreshment protocols, models can quickly become digital fossils, offering insights that are not just useless but potentially harmful.

Systemic Bias and a Lack of Representation

AI models are mirrors reflecting the data they are shown. If that data contains historical or societal biases, the AI will learn, codify, and often amplify them at scale. A hiring algorithm trained predominantly on resumes of successful male candidates may systematically penalize qualified female applicants. A facial recognition system trained on a dataset lacking ethnic diversity may perform poorly for underrepresented groups. Proactive AI bias prevention is therefore a critical component of ethical AI training. It involves auditing datasets for skewed representation and implementing strategies to ensure fairness and equity in model outcomes.
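A first-pass audit of this kind can be sketched in a few lines. The example below uses hypothetical toy hiring records (the field names `gender` and `hired` and all counts are invented for illustration) and reports each group's share of the dataset and its positive-outcome rate, plus the ratio between the lowest and highest rate.

```python
from collections import Counter


def audit_representation(records, group_key, label_key):
    """Return each group's share of the dataset and its positive-label rate."""
    counts = Counter(r[group_key] for r in records)
    positives = Counter(r[group_key] for r in records if r[label_key] == 1)
    total = len(records)
    return {
        group: {"share": n / total, "positive_rate": positives[group] / n}
        for group, n in counts.items()
    }


# Hypothetical toy data: 1 = hired, 0 = rejected.
records = (
    [{"gender": "male", "hired": 1}] * 60
    + [{"gender": "male", "hired": 0}] * 20
    + [{"gender": "female", "hired": 1}] * 5
    + [{"gender": "female", "hired": 0}] * 15
)

report = audit_representation(records, "gender", "hired")
for group, stats in report.items():
    print(f"{group}: share={stats['share']:.2f}, "
          f"positive_rate={stats['positive_rate']:.2f}")

# Ratio of the lowest to the highest positive rate across groups
# (assumes at least one group has a non-zero positive rate).
rates = [s["positive_rate"] for s in report.values()]
disparate_impact = min(rates) / max(rates)
print(f"disparate impact ratio: {disparate_impact:.2f}")
```

A ratio well below 1 signals that one group receives the positive outcome far less often than another. A common rule of thumb treats values under 0.8 as worth investigating, though the right threshold depends on the domain and on applicable regulation.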

The Malicious Threat of Data Poisoning

Beyond accidental flaws, there is the deliberate threat of data poisoning attacks. In this scenario, malicious actors intentionally inject carefully crafted, corrupt data into a training set. The goal is to manipulate the model’s behavior in a subtle but significant way. For example, an attacker could poison an image recognition model to misclassify a specific object or create a “backdoor” that causes the system to fail under specific, predetermined conditions. This represents a serious cybersecurity threat, particularly for AI systems used in critical infrastructure, finance, and defense.

The Real-World Impact of Degraded AI Models

The consequences of training AI on poor-quality data are not abstract academic concerns. They translate into tangible failures that can cost businesses money, erode customer trust, and have serious societal repercussions.

Eroding Trust and Damaging Brand Reputation

When a customer service chatbot provides nonsensical answers or a content generation tool produces biased or offensive text, the immediate casualty is user trust. A single high-profile failure can lead to public relations disasters, customer churn, and lasting damage to a company’s brand. Users expect AI to be reliable and helpful; when it proves to be anything but, they quickly lose faith in the technology and the organization deploying it.

Financial and Operational Inefficiency

In a business context, a flawed AI model is a liability. A predictive maintenance AI that fails to anticipate equipment failure can lead to costly downtime. An inventory management system that consistently makes poor forecasts results in wasted stock and lost sales opportunities. The initial investment in developing an AI solution can be completely undermined if the underlying data quality is poor, turning a potential asset into a source of operational friction and financial loss.

The High Stakes of Ethical Failures

In high-stakes domains, the impact of bad data is even more severe. An AI used in the justice system that is trained on biased historical sentencing data could perpetuate and even exacerbate systemic inequality. In healthcare, a diagnostic model that performs poorly on data from a specific demographic could lead to life-threatening misdiagnoses. Ensuring ethical AI training is not just about compliance; it’s a moral imperative to prevent automated systems from causing real-world harm.

From Garbage Collection to Data Curation: Actionable Solutions

Combating the “garbage in, garbage out” problem requires a strategic shift from a model-centric to a data-centric approach. Improving and curating the dataset often yields larger performance gains than tweaking a model’s architecture, which is why data quality work deserves a place at the center of any AI project.

Advanced Data Cleansing and Preprocessing

Go beyond simply filling in missing values. Modern data cleansing involves sophisticated techniques like statistical outlier detection to remove anomalous entries, data normalization to bring features to a common scale, and data augmentation to expand the dataset. Programmatic labeling tools can also accelerate the process of creating high-quality labels by applying rules and heuristics defined by subject matter experts.
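Two of the techniques named above, statistical outlier detection and normalization, can be sketched with the standard library alone. The example assumes a single numeric feature (a hypothetical list of prices) and uses the interquartile-range rule with the conventional multiplier of 1.5; other detectors and other cutoffs are equally valid choices.

```python
import statistics


def remove_outliers_iqr(values, k=1.5):
    """Drop values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]


def min_max_normalize(values):
    """Rescale values linearly so the smallest is 0.0 and the largest is 1.0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant feature carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical feature with one obviously anomalous entry.
prices = [12.0, 14.5, 13.2, 15.1, 12.8, 14.0, 13.7, 950.0]

cleaned = remove_outliers_iqr(prices)
scaled = min_max_normalize(cleaned)
print("kept:  ", cleaned)
print("scaled:", [round(v, 3) for v in scaled])
```

The order matters here: normalizing before removing the outlier would squash every legitimate price into a narrow band near zero, because the anomalous value would define the top of the scale.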

The Human-in-the-Loop Imperative

Automation cannot fully replace human expertise. A Human-in-the-Loop (HITL) approach integrates human oversight directly into the AI development lifecycle, and it is central to effective data curation. Subject matter experts are essential for validating data, correcting mislabeled examples, and providing context that the model cannot infer on its own. Techniques like active learning are particularly powerful: the model flags the data points it is least certain about and requests human input on those first, so expert time goes where it matters most.
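One common way to implement the "flag the most uncertain points" step is uncertainty sampling based on prediction entropy. The sketch below assumes the model already produces class probabilities for each unlabeled item; the document IDs, probabilities, and the `budget` parameter are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy of a probability vector (higher = more uncertain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_review(predictions, budget):
    """Return the `budget` item IDs whose predictions are most uncertain.

    `predictions` maps an item ID to the model's class probabilities.
    """
    ranked = sorted(predictions,
                    key=lambda item: entropy(predictions[item]),
                    reverse=True)
    return ranked[:budget]


# Hypothetical model outputs for four unlabeled documents.
predictions = {
    "doc-1": [0.98, 0.01, 0.01],  # confident
    "doc-2": [0.40, 0.35, 0.25],  # uncertain
    "doc-3": [0.70, 0.20, 0.10],  # fairly confident
    "doc-4": [0.34, 0.33, 0.33],  # nearly uniform, most uncertain
}

to_label = select_for_review(predictions, budget=2)
print("send to human reviewers:", to_label)
```

Here the two near-uniform predictions are routed to reviewers, while the confident ones are left alone. Entropy is only one possible uncertainty score; margin-based or disagreement-based scores follow the same pattern.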

Proactive Bias Detection and Mitigation

Achieving fairness requires a deliberate effort. The first step in bias prevention is to audit datasets using specialized tools (like Google’s What-If Tool or IBM’s AIF360) to identify statistical biases across different subgroups. Once biases are identified, they can be mitigated through various techniques, such as re-weighting data points so that underrepresented groups carry more weight, using adversarial debiasing to train a model whose predictions give an adversary as little information as possible about the sensitive attribute, or applying fairness constraints during the optimization process.
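The re-weighting idea can be made concrete with a scheme often called reweighing: each example gets the weight P(group) × P(label) / P(group, label), so that group and label look statistically independent once the weights are applied. The following is a standalone sketch on hypothetical toy data, not the API of AIF360 or any other toolkit.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-example weights that make group and label independent.

    weight(g, y) = P(g) * P(y) / P(g, y), estimated from counts.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels within one group."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights)
                   if g == group and y == 1)
    return positive / total


# Hypothetical toy data: group "a" is over-represented among positives.
groups = ["a"] * 80 + ["b"] * 20
labels = [1] * 60 + [0] * 20 + [1] * 5 + [0] * 15

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
print(f"weighted positive rate, group a: {rate_a:.2f}")
print(f"weighted positive rate, group b: {rate_b:.2f}")
```

After weighting, both groups show the same positive rate as the dataset overall, so a learner that honors sample weights no longer sees group membership as predictive of the label. This addresses only the imbalance visible in the labels themselves; proxies for the sensitive attribute hidden in other features need separate treatment.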

Building a Foundation for Trustworthy AI

To create a future of reliable and beneficial AI, we must build on a solid foundation of high-quality, well-managed data. This involves adopting new practices and a new mindset focused on data excellence.

A key emerging practice is establishing clear data provenance and lineage. This means meticulously tracking where your data originates, what transformations it has undergone, and who has accessed it. This transparency is crucial for debugging models, ensuring regulatory compliance, and building trust in your AI systems.
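As a rough illustration of what a lineage record might contain, the sketch below fingerprints a dataset with SHA-256 and appends an entry for each transformation, recording who performed it and the hashes before and after. The class, field names, and the example source URI are all hypothetical; production systems typically rely on dedicated metadata or data-versioning tools rather than hand-rolled records.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def sha256_of(content: bytes) -> str:
    """Hex SHA-256 fingerprint of a dataset's raw bytes."""
    return hashlib.sha256(content).hexdigest()


@dataclass
class LineageRecord:
    source: str                 # where the data originated
    content_sha256: str         # fingerprint of the current version
    transformations: list = field(default_factory=list)

    def add_step(self, name: str, actor: str, new_content: bytes) -> None:
        """Log a transformation and update the current fingerprint."""
        new_hash = sha256_of(new_content)
        self.transformations.append({
            "step": name,
            "actor": actor,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "input_sha256": self.content_sha256,
            "output_sha256": new_hash,
        })
        self.content_sha256 = new_hash


# Hypothetical dataset: a raw CSV with one duplicated row.
raw = b"id,text\n1,hello\n2,hello\n2,hello\n"
record = LineageRecord(source="s3://example-bucket/raw/2024-01.csv",
                       content_sha256=sha256_of(raw))

deduplicated = b"id,text\n1,hello\n2,hello\n"
record.add_step("drop_duplicates", actor="data-team@example.com",
                new_content=deduplicated)

print(json.dumps(asdict(record), indent=2))
```

Because each entry links an input hash to an output hash, anyone debugging a model can check that the file in front of them really is the version the record describes, and can trace it back step by step to its source.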

Ultimately, this points to the rise of data-centric AI—a philosophy that prioritizes systematic improvement of data quality over endless model tuning. Organizations that adopt this approach will be better equipped to build robust AI models that are not only powerful but also fair, secure, and dependable over the long term.

Frequently Asked Questions about AI Data Quality

- What is model collapse?

Model collapse is a degenerative process in which model quality degrades over successive generations because each new model is increasingly trained on synthetic data generated by earlier models. This feedback loop causes the model to lose touch with the original human data distribution, leading to a loss of diversity and an increase in errors.

- How can I detect bias in my training data?

Detecting bias involves performing an exploratory data analysis, specifically looking at the distribution of different demographic or protected attributes within your dataset. You can use statistical tests and visualization tools to see if certain groups are underrepresented or if correlations exist between sensitive attributes and the target outcome. Specialized toolkits like Fairlearn and AIF360 are designed for this purpose.

- Isn’t more data always better for AI training?

Not necessarily. While large datasets are often beneficial, data quality is far more important than sheer quantity. A smaller, well-curated, and representative dataset can produce a more accurate and reliable model than a massive dataset filled with noise, errors, and biases. The goal is high-quality, relevant data, not just big data.

- What is data poisoning and how can I protect my AI from it?

Data poisoning is a malicious attack where an adversary intentionally injects corrupt data into a training set to compromise the model’s behavior. Protection involves implementing robust data validation and anomaly detection pipelines, maintaining strict access controls over training data, and using techniques such as differential privacy and robust training methods that limit how much any single data point can influence the model.
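As one small example of an anomaly check that can sit in a validation pipeline, the sketch below flags training examples whose label disagrees with the majority label of their nearest neighbors, a simple heuristic aimed at label-flipping attacks. The feature vectors, labels, and the choice of k = 3 are hypothetical, and the check is not a complete defense: it will not catch subtle backdoor triggers, and flagged items should go to a human reviewer rather than being deleted automatically.

```python
import math
from collections import Counter


def flag_suspicious_labels(points, labels, k=3):
    """Return indices whose label differs from the majority label
    among their k nearest neighbours (Euclidean distance)."""
    flagged = []
    for i, (point, label) in enumerate(zip(points, labels)):
        neighbours = sorted(
            (j for j in range(len(points)) if j != i),
            key=lambda j: math.dist(point, points[j]),
        )[:k]
        majority, _ = Counter(labels[j] for j in neighbours).most_common(1)[0]
        if majority != label:
            flagged.append(i)
    return flagged


# Hypothetical 2-D feature vectors forming two well-separated clusters.
points = [
    (0.0, 0.1), (0.2, 0.0), (0.1, 0.3), (0.15, 0.1),  # cluster near the origin
    (5.0, 5.1), (5.2, 4.9), (4.9, 5.3), (5.1, 5.0),   # cluster near (5, 5)
]
labels = [
    "cat", "cat", "cat", "dog",  # index 3 sits among cats but is labeled "dog"
    "dog", "dog", "dog", "dog",
]

suspicious = flag_suspicious_labels(points, labels)
print("indices to review:", suspicious)
```

The brute-force neighbor search is quadratic in the number of examples, which is fine for a demonstration but would need an approximate nearest-neighbor index on real training sets.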

Don’t Let Your AI Eat Junk Food

The performance, safety, and ethical integrity of any AI system are a direct reflection of the data it was fed. As the digital world fills with AI-generated content, the risk of our models feasting on a diet of low-quality “junk food” data is higher than ever. Avoiding this pitfall requires a proactive, disciplined approach to data management. Proactive data curation, rigorous cleansing, and strategic bias mitigation are not just technical chores—they are strategic imperatives for any organization serious about building valuable and trustworthy AI.

Building robust, ethical, and high-performing AI systems starts with a solid data foundation. If you’re ready to move beyond the ‘garbage in, garbage out’ cycle, the experts at KleverOwl can help. Explore our AI & Automation solutions or contact us to discuss your data strategy today.