Breaking the Chains: Why AI Hardware and Compiler Agnosticism is the Future

The incredible progress in artificial intelligence is deeply intertwined with the development of specialized AI hardware. For over a decade, one ecosystem has overwhelmingly dominated this space: NVIDIA’s GPUs, powered by their CUDA programming model. This combination enabled the deep learning explosion, but it also forged a set of golden handcuffs. Developers built a world of AI models on a foundation that presupposed a specific brand of hardware. As the AI field matures, this dependency is becoming a critical liability. The strategic shift towards AI hardware and compiler agnosticism is no longer a niche academic concept; it’s a necessary evolution for building scalable, cost-effective, and future-proof AI systems. This approach promises to free developers from vendor lock-in, allowing them to choose the best tool for the job, regardless of the silicon it runs on.

The CUDA Moat: A Powerful but Enclosing Fortress

To understand the move towards agnosticism, we first need to appreciate the moat NVIDIA built with CUDA (Compute Unified Device Architecture). When NVIDIA introduced it in the mid-2000s, it was a game-changer: it gave developers a C-like language and a set of powerful libraries to unlock the massive parallel processing capabilities of GPUs for general-purpose tasks, a practice now known as GPU computing.

The Foundation of the AI Boom

CUDA’s success wasn’t accidental. NVIDIA invested heavily in creating a robust and mature ecosystem that became the bedrock of the deep learning revolution. Key components included:

- cuDNN (CUDA Deep Neural Network library): A GPU-accelerated library of primitives for deep neural networks. It provided highly optimized implementations for standard routines like convolutions, pooling, and activation functions, saving developers immense amounts of time.

- cuBLAS (CUDA Basic Linear Algebra Subroutines): An accelerated library for matrix and vector operations, which are the fundamental mathematical building blocks of most AI models.

- A Mature Toolchain: Comprehensive debuggers, profilers, and extensive documentation gave developers the tools they needed to build and optimize complex applications effectively.
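To make the "building blocks" point concrete, here is a minimal pure-Python sketch of the matrix-multiply-plus-bias pattern behind a dense neural network layer. The function names and the numbers are illustrative assumptions; libraries such as cuBLAS and cuDNN perform the same arithmetic with heavily tuned GPU kernels rather than Python loops.

```python
# Reference version of y = x @ W + b for a small batch, using nested lists.
# Illustrative only: GPU libraries run the same math with tuned kernels.

def matmul(a, b):
    """Multiply an (m x k) matrix by a (k x n) matrix."""
    k = len(b)
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions do not match")
    n = len(b[0])
    return [[sum(row[p] * b[p][j] for p in range(k)) for j in range(n)]
            for row in a]

def dense(x, weights, bias):
    """Apply a dense layer (without activation) to a batch of input rows."""
    return [[v + bj for v, bj in zip(row, bias)] for row in matmul(x, weights)]

x = [[1.0, 2.0], [3.0, 4.0]]               # batch of 2 inputs, 2 features each
w = [[1.0, 0.0, -1.0], [0.5, 2.0, 1.0]]    # 2 x 3 weight matrix
b = [0.0, 1.0, 0.5]                        # one bias per output feature
y = dense(x, w, b)
print(y)  # [[2.0, 5.0, 1.5], [5.0, 9.0, 1.5]]
```

Almost all of the work sits in the matmul call, which is why an optimized matrix library matters so much for overall model speed.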

This powerful ecosystem meant that for years, if you were serious about AI model training, you were serious about NVIDIA. The performance was unmatched, and the developer experience was significantly smoother than any alternative.

The High Walls of a Walled Garden

However, this dominance created a classic case of vendor lock-in. CUDA code runs natively only on NVIDIA GPUs; moving it to other hardware requires porting or a translation layer. AI frameworks, research papers, and open-source models were overwhelmingly built and tested within this ecosystem. This created several strategic challenges for businesses and developers:

- Lack of Choice: You couldn’t easily switch to a competitor’s hardware, even if it offered a better price-to-performance ratio for your specific workload.

- Pricing Power: With limited competition, the dominant player can dictate market prices, leading to higher costs for AI infrastructure.

- Supply Chain Risk: Relying on a single supplier for critical hardware creates a significant business risk, as seen during recent chip shortages.

- Stifled Innovation: While NVIDIA innovates rapidly, a single-vendor market can slow down the broader pace of architectural diversity and experimentation in the hardware space.

The Rise of Contenders: A Diversifying Hardware Field

The market’s dependency on a single vendor couldn’t last forever. The enormous demand for AI compute has spurred a wave of innovation, creating a much richer and more diverse field of AI hardware options.

AMD GPUs and the ROCm Challenge

NVIDIA’s most direct competitor in the GPU space, AMD, has been steadily building its alternative software ecosystem. AMD GPUs offer compelling hardware performance, often at a competitive price point. The key to unlocking this performance is ROCm (Radeon Open Compute Platform), AMD’s open-source software stack for GPU computing.

ROCm is designed to be a direct alternative to CUDA. It includes its own set of libraries, like rocBLAS and MIOpen, that mirror the functionality of cuBLAS and cuDNN. HIP (Heterogeneous-compute Interface for Portability), a C++ runtime API and kernel language that closely mirrors CUDA, lets a single codebase target both NVIDIA and AMD hardware, and the accompanying HIPIFY tools automate much of the conversion of existing CUDA code. While ROCm has historically lagged behind CUDA in maturity and library support, recent investments and broader industry adoption by major frameworks like PyTorch and TensorFlow are rapidly closing the gap, making AMD GPUs a viable and attractive option for many AI workloads.

A New Wave of AI Accelerators

The innovation extends far beyond traditional GPUs. A new class of specialized processors, often called AI accelerators, has emerged, each designed to excel at specific AI tasks:

- Google’s Tensor Processing Units (TPUs): Custom ASICs (Application-Specific Integrated Circuits) designed from the ground up to accelerate the matrix operations at the heart of neural networks. They are particularly efficient for large-scale training and inference.

- Neural Processing Units (NPUs): These are specialized, low-power processors integrated into everything from smartphones to IoT devices. They are designed to run AI inference tasks efficiently on the edge, without needing to connect to a powerful cloud server.

- Custom Silicon from Startups: Companies like Groq, Cerebras, and SambaNova are building entirely new architectures (LPUs, Wafer-Scale Engines) that rethink how AI computation is performed, promising massive performance gains for specific types of models.

This explosion of hardware diversity is exciting, but it also amplifies the problem of software fragmentation. A model optimized for a GPU won’t run efficiently on a TPU or an LPU without significant modification. This is where compiler agnosticism becomes essential.

The Software Bridge: Compilers and Intermediate Representations

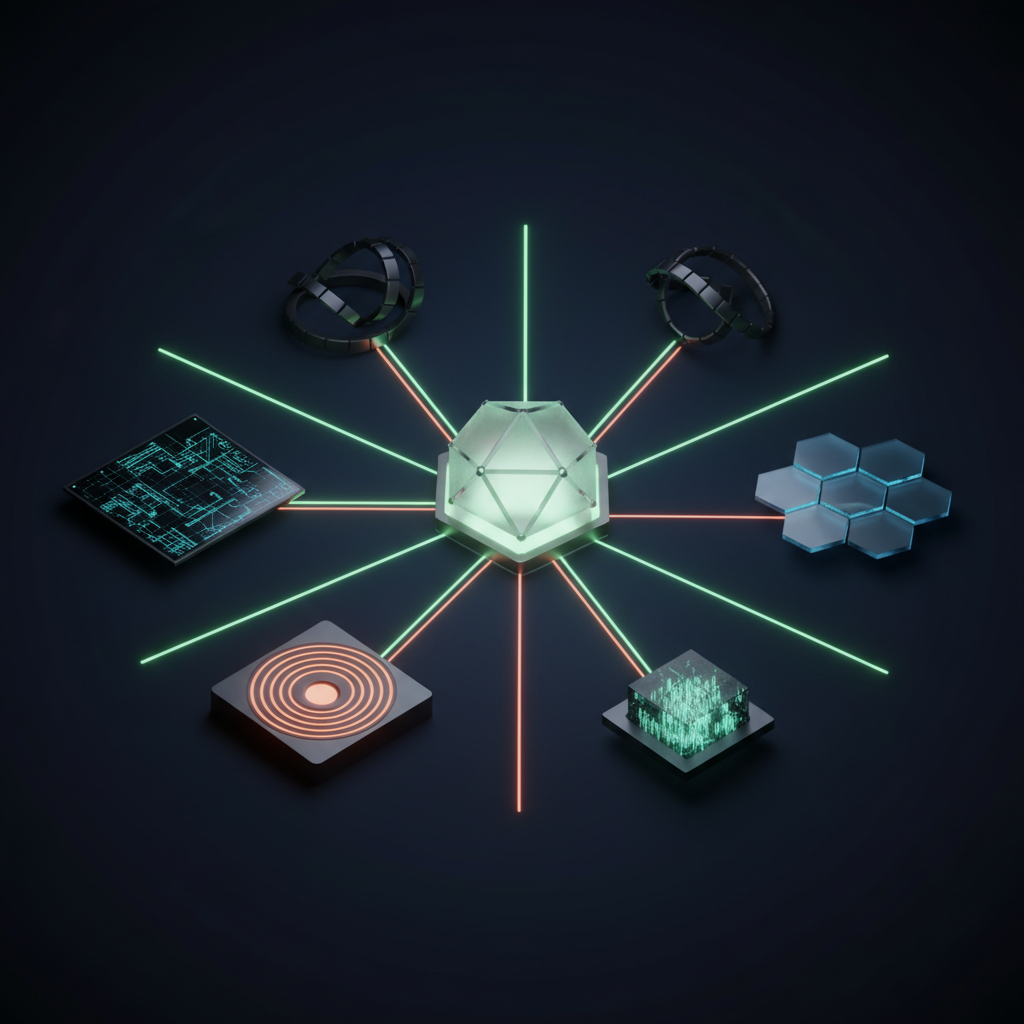

If hardware is becoming more diverse, the software layer must become more abstract and universal. Instead of writing code for a specific chip, developers need to write code that can be efficiently translated to run on any chip. This is the job of modern AI compilers and a concept called an Intermediate Representation (IR).

What is an Intermediate Representation (IR)?

Think of an IR as a universal language for AI models. When a developer builds a model in a high-level framework like PyTorch or TensorFlow, the model can be translated into this common IR. From that hardware-neutral format, backend-specific compilers then generate optimized, low-level machine code for each target architecture: NVIDIA GPUs, AMD GPUs, Google TPUs, or custom NPUs.

This “translate-once, run-anywhere” approach is far more efficient than creating and maintaining separate codebases for each piece of hardware. It decouples the AI model’s logic from the physical silicon it will run on.
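The decoupling described above can be sketched with a toy example. Everything here is hypothetical: the Op record, the target names gpu_a and npu_b, and the kernel names are invented for illustration, and a real compiler would emit machine code rather than strings. The point is only that one hardware-neutral program feeds several backends.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Op:
    name: str       # operation kind, e.g. "matmul", "add", "relu"
    inputs: tuple   # names of the values the op reads
    output: str     # name of the value the op produces

# The "IR": a hardware-neutral description of relu(x @ w + b).
PROGRAM = [
    Op("matmul", ("x", "w"), "t0"),
    Op("add", ("t0", "b"), "t1"),
    Op("relu", ("t1",), "y"),
]

# Hypothetical per-target kernel tables (invented names, not real APIs).
KERNELS = {
    "gpu_a": {"matmul": "gpu_a.gemm", "add": "gpu_a.eltwise_add",
              "relu": "gpu_a.relu"},
    "npu_b": {"matmul": "npu_b.mac_array", "add": "npu_b.vec_add",
              "relu": "npu_b.act_relu"},
}

def lower(program, target):
    """Translate the shared IR into pseudo-instructions for one target."""
    table = KERNELS[target]
    lines = []
    for op in program:
        if op.name not in table:
            raise NotImplementedError(f"{target} has no kernel for {op.name}")
        lines.append(f"{op.output} = {table[op.name]}({', '.join(op.inputs)})")
    return lines

for target in KERNELS:
    print(target, lower(PROGRAM, target))
```

Supporting a new chip in this sketch means adding one entry to KERNELS; PROGRAM, which stands in for the model, does not change.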

Key Technologies Enabling Agnosticism

Several key projects are making this vision a reality:

- MLIR (Multi-Level Intermediate Representation): An open-source compiler infrastructure project from the LLVM family. MLIR provides a flexible and extensible framework for creating IRs. It allows hardware makers to create their own “dialects” within MLIR, making it easier to represent their unique architectural features and build compilers that can target them effectively.

- OpenXLA: An open-source compiler project built around XLA (Accelerated Linear Algebra). Building on MLIR, it aims to connect major ML frameworks (like TensorFlow, PyTorch, and JAX) to a wide variety of AI hardware. It acts as the bridge, taking a model from the framework, translating it through its IR, and compiling it for a specific backend, whether it’s a CPU, GPU, or TPU.

- ONNX (Open Neural Network Exchange): While not a compiler, ONNX is a related and important standard. It’s an open format for representing AI models. You can train a model in one framework (e.g., PyTorch), export it to the ONNX format, and then use an ONNX-compatible runtime to run it on completely different hardware or in a different environment.
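The "multi-level" idea behind MLIR can be illustrated with a toy lowering pass. This is a simplified sketch, not MLIR syntax or its API: the toy_nn and toy_linalg prefixes are made-up stand-ins for dialects, and operations are plain tuples of (name, inputs, result).

```python
def lower_nn_to_linalg(ops):
    """Rewrite high-level toy_nn ops into simpler toy_linalg ops."""
    out = []
    for name, inputs, result in ops:
        if name == "toy_nn.dense":
            x, w, b = inputs
            tmp = f"{result}_mm"  # fresh name for the intermediate product
            out.append(("toy_linalg.matmul", (x, w), tmp))
            out.append(("toy_linalg.add", (tmp, b), result))
        elif name == "toy_nn.relu":
            # relu(v) is max(v, 0), expressed with a generic elementwise op
            out.append(("toy_linalg.max", (inputs[0], "0.0"), result))
        else:
            out.append((name, inputs, result))  # already low-level: keep as-is
    return out

# A two-layer network written at the highest level of abstraction.
high_level = [
    ("toy_nn.dense", ("x", "w1", "b1"), "h"),
    ("toy_nn.relu", ("h",), "a"),
    ("toy_nn.dense", ("a", "w2", "b2"), "y"),
]

mid_level = lower_nn_to_linalg(high_level)
for op in mid_level:
    print(op)
```

Each level keeps only as much detail as the next pass needs, so a hardware vendor can plug in at the level that matches its chip instead of re-implementing the whole stack.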

The Practical Benefits of a Hardware-Agnostic Strategy

Adopting a hardware-agnostic approach isn’t just an elegant engineering solution; it delivers tangible business and operational advantages.

Future-Proofing Your AI Investments

By building your AI software on an abstraction layer like OpenXLA, you are no longer betting on a single hardware vendor. When a new, more powerful, or more cost-effective accelerator hits the market, you can adopt it without having to re-architect your entire AI stack. Your high-level model code remains the same; you simply target a new compiler backend. This agility is a powerful competitive advantage.

Optimizing for Cost and Performance

Not all AI tasks are created equal. Model training is computationally intense and benefits from the most powerful hardware available. Model inference, on the other hand, might need to run on millions of low-power devices at the edge. A hardware-agnostic strategy enables “heterogeneous computing,” where you can seamlessly deploy the same core model to the best-suited hardware for each task. You can train on high-end NVIDIA or AMD GPUs in the cloud and then compile the same model to run efficiently on an NPU in an Android device.
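A small worked example shows how this choice might be made once software no longer dictates the hardware. All targets and figures below are invented for illustration (they are not benchmarks or real prices), and the selection rule, lowest total cost that still meets a deadline and a power cap, is just one reasonable assumption.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Target:
    name: str
    throughput: float      # work units per hour (made-up scale)
    price_per_hour: float  # USD per hour (made-up)
    watts: float           # power draw (made-up)

# Hypothetical catalog; none of these numbers describe real products.
CATALOG = [
    Target("cloud_gpu_x", throughput=100.0, price_per_hour=4.00, watts=700.0),
    Target("cloud_gpu_y", throughput=80.0, price_per_hour=2.40, watts=600.0),
    Target("edge_npu_z", throughput=2.0, price_per_hour=0.01, watts=3.0),
]

def cheapest_for_job(catalog, work_units, deadline_hours, max_watts=float("inf")):
    """Return (target, cost) with the lowest total cost that meets both limits,
    or None when no target qualifies."""
    best = None
    for t in catalog:
        hours = work_units / t.throughput
        if hours > deadline_hours or t.watts > max_watts:
            continue  # too slow or too power-hungry for this job
        cost = hours * t.price_per_hour
        if best is None or cost < best[1]:
            best = (t, cost)
    return best

training = cheapest_for_job(CATALOG, work_units=1000, deadline_hours=24)
edge = cheapest_for_job(CATALOG, work_units=10, deadline_hours=24, max_watts=5)
print(training[0].name, round(training[1], 2))
print(edge[0].name, round(edge[1], 2))
```

With these made-up numbers the training job lands on the slower but cheaper cloud GPU, while the power cap forces the inference job onto the NPU. That kind of per-task selection is only practical when the same model can be compiled for every candidate.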

Fostering a Healthier, More Competitive Market

When software is no longer tied to specific hardware, hardware vendors must compete on the merits of their silicon: performance, power efficiency, and price. This levels the playing field, encouraging more companies to enter the AI hardware market. The resulting competition benefits everyone, driving down costs and accelerating the pace of innovation across the entire industry.

Challenges on the Path to True Agnosticism

While the direction is clear, the transition to a fully hardware-agnostic world is not without its challenges. It’s important to approach this shift with a realistic perspective.

First, abstraction can come with a performance cost. A compiler generating code for generic hardware might not achieve the same level of hand-tuned performance as an expert writing native CUDA code for a specific NVIDIA GPU. While compilers are getting remarkably good, closing this “performance gap” remains an active area of development.
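Before accepting or rejecting an abstraction layer, it helps to measure the gap rather than guess at it. The sketch below is an analogy in plain Python, not a GPU benchmark: dot_generic stands in for compiler-generated code, dot_tuned for a hand-optimized kernel, and slowdown is a hypothetical helper that first checks both give the same answer and then compares their best timings.

```python
import timeit
from operator import mul

def dot_generic(a, b):
    """Straightforward loop: stands in for generic compiler output."""
    total = 0.0
    for i in range(len(a)):
        total += a[i] * b[i]
    return total

def dot_tuned(a, b):
    """Uses built-ins implemented in C: stands in for a hand-tuned kernel."""
    return sum(map(mul, a, b))

def slowdown(baseline, candidate, args, repeat=5, number=200):
    """Return candidate_time / baseline_time, using the best of several runs."""
    if baseline(*args) != candidate(*args):
        raise ValueError("implementations disagree; timing is meaningless")
    t_base = min(timeit.repeat(lambda: baseline(*args), repeat=repeat, number=number))
    t_cand = min(timeit.repeat(lambda: candidate(*args), repeat=repeat, number=number))
    return t_cand / t_base

# Integer-valued floats keep both sums exact, so the equality check is safe.
a = [float(i) for i in range(1000)]
b = [2.0] * 1000
ratio = slowdown(dot_tuned, dot_generic, (a, b))
print(f"generic version takes {ratio:.2f}x the time of the tuned one")
```

The same pattern of verifying equivalence first and timing second applies when comparing a compiled model against a hand-written native kernel on real hardware.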

Second, the ecosystem for these new tools is still maturing. The nearly two decades of community knowledge, tutorials, and debugging tools built around the CUDA ecosystem are a formidable advantage. The documentation, community support, and tooling for newer projects like OpenXLA are growing rapidly but are not yet as comprehensive.

Finally, there is a learning curve. Developers and ML engineers who are deeply familiar with one specific stack will need to invest time in understanding these new layers of abstraction, from high-level frameworks down to the compiler IR, to effectively debug and optimize their models.

Frequently Asked Questions

What is hardware agnosticism in AI?

AI hardware agnosticism is a development approach where AI software, such as neural network models, is designed to be independent of the specific underlying hardware it runs on. This is achieved using abstraction layers like compilers and intermediate representations, allowing the same model to be executed efficiently on different types of processors, like GPUs from various vendors, TPUs, or NPUs.

Is CUDA still relevant in a hardware-agnostic world?

Absolutely. CUDA and NVIDIA GPUs will remain a critical part of the AI ecosystem for the foreseeable future. They represent the high-performance benchmark that other systems are measured against. In a hardware-agnostic world, CUDA simply becomes one of many important compiler targets, rather than the only viable option. Its mature libraries and tools will continue to be valuable for performance-critical applications.

Can I run my PyTorch model on AMD GPUs today?

Yes. PyTorch has native support for AMD GPUs through the ROCm software stack. ROCm builds of PyTorch deliberately reuse the torch.cuda interface, so code that selects the “cuda” device typically runs on supported AMD GPUs with few or no changes. The ongoing improvements in ROCm and framework integration are making AMD hardware a very competitive platform for both training and inference.
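As a minimal sketch of what vendor-neutral device selection can look like, the helpers below (pick_device and build_flavor are hypothetical names) rely on the fact that ROCm builds of PyTorch report AMD GPUs through torch.cuda. The import is guarded so the snippet also runs, and simply falls back to the CPU, on a machine without PyTorch installed.

```python
def pick_device():
    """Return a device string, preferring a GPU when PyTorch can see one."""
    try:
        import torch
    except ImportError:
        return "cpu"  # PyTorch is not installed, so there is no GPU path
    # ROCm builds expose AMD GPUs through the torch.cuda API, so this one
    # check covers both NVIDIA (CUDA) and AMD (ROCm/HIP) builds.
    return "cuda" if torch.cuda.is_available() else "cpu"

def build_flavor():
    """Report which GPU stack this PyTorch build was compiled against."""
    try:
        import torch
    except ImportError:
        return "none"
    if getattr(torch.version, "hip", None):
        return "rocm"   # torch.version.hip is set only on ROCm builds
    if getattr(torch.version, "cuda", None):
        return "cuda"   # torch.version.cuda is set only on CUDA builds
    return "cpu"

device = pick_device()
print(f"build: {build_flavor()}, selected device: {device}")
```

Model code can then call model.to(device) without caring which vendor supplied the GPU.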

How does compiler agnosticism help with AI on edge devices?

It’s critically important for the edge. The “edge” consists of a vast and fragmented ecosystem of low-power processors (NPUs, DSPs, microcontrollers) from dozens of manufacturers. A compiler-centric, agnostic approach allows a company to design a single AI model and then use the compiler to generate optimized code for each specific edge device in their product line, dramatically reducing development and maintenance overhead.

Conclusion: Building a More Open and Flexible AI Future

The era of AI development being synonymous with a single hardware platform is drawing to a close. The tight coupling between software and silicon that kickstarted the deep learning revolution is now a bottleneck to future growth and innovation. The path forward is through abstraction and choice.

AI hardware and compiler agnosticism, powered by sophisticated technologies like MLIR and OpenXLA, represents a fundamental shift. It empowers developers and businesses to break free from vendor lock-in, optimize their workloads across a diverse range of hardware, and build AI systems that are more resilient, cost-effective, and adaptable to the innovations of tomorrow. This transition isn’t about picking a new winner; it’s about building a system where everyone can win.
