The Death of the “Everything Prompt”: Why Google’s Move Toward Structured AI is the Future

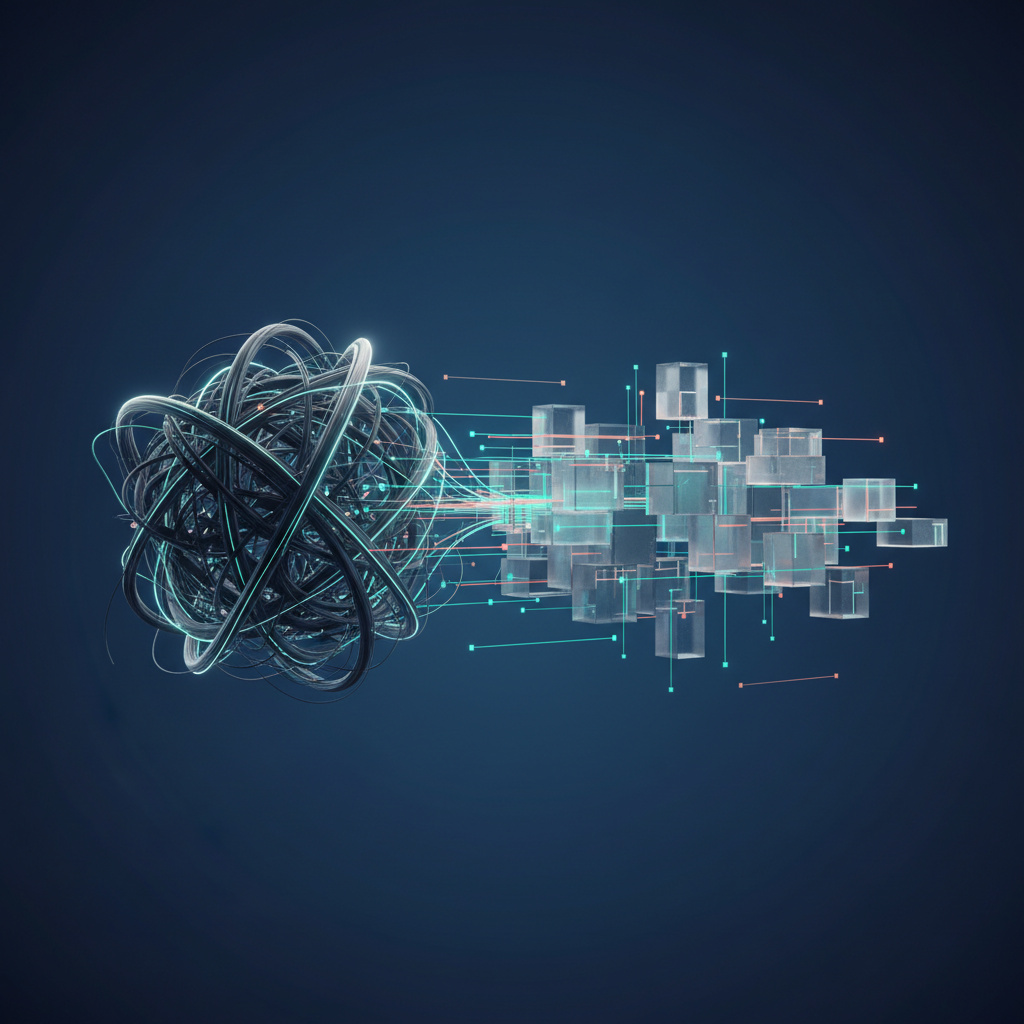

For the last couple of years, the generative AI world has been obsessed with a single, almost mythical, concept: the “everything prompt.” We’ve all seen them—long, convoluted blocks of text meticulously crafted to coax a Large Language Model (LLM) into performing a complex task in one go. It felt like modern-day alchemy, mixing instructions, context, and examples to spin text into gold. But that era is rapidly drawing to a close. The industry, led by giants like Google, is signaling a major pivot away from this brute-force approach towards a far more robust and sophisticated paradigm. This is the dawn of the structured AI development strategy, a move from commanding a single, all-knowing oracle to orchestrating a team of specialized, tool-wielding experts.

Beyond the Monolith: Why the “Everything Prompt” Was a Dead End

The “everything prompt” was a necessary first step in our journey with generative AI. It taught us the fundamentals of communicating with LLMs. The idea was simple: if you want a complex output, you need to provide all the necessary information upfront. This meant cramming persona instructions, formatting rules, extensive background context, few-shot examples, and the final query into one massive request.

While clever, this approach was fundamentally flawed for building real-world, scalable applications. Its limitations quickly became apparent:

- Brittleness: An “everything prompt” is like a house of cards. A small, seemingly insignificant change in wording could cause the entire output to collapse into nonsense or ignore a critical instruction.

- Poor Scalability: How do you maintain or debug a 2,000-word prompt? As business logic evolves, updating these monolithic prompts becomes a nightmare of trial and error, with no version control or modularity.

- Limited Capability: These prompts fail spectacularly at tasks requiring multiple steps, external knowledge, or interaction with other systems. An LLM can’t check real-time inventory, book a flight, or query a live database from within a single, static prompt.

- Context Window Constraints: As prompts grow, they consume more of the model’s context window and eventually hit its limit. Longer prompts cost more per request and can dilute the model’s focus, which tends to make hallucinations more frequent as it struggles to weigh all the information provided.

This approach treated the LLM like a black box to be tricked into compliance. The future, however, lies in building transparent, multi-component systems where the LLM is a powerful reasoning engine at the core, not the entire machine.

What is Structured AI? Deconstructing the New Paradigm

Structured AI is a shift in mindset from prompt engineering to systems engineering. It’s about designing and building applications as a collection of interconnected, specialized components that collaborate to achieve a complex goal. Instead of one giant prompt, you create a workflow where different AI and non-AI components are called upon as needed. The Google AI strategy, particularly with its recent announcements, provides a perfect blueprint for this new model. Let’s break down its core pillars.

AI Agent Frameworks and Multi-Agent Systems

At the heart of structured AI is the concept of an agent. An AI agent is more than just a chatbot; it’s an autonomous system that perceives its environment, makes decisions, and takes actions to achieve a specific goal. Think of it as a digital worker with a specific job description. You might have one agent whose sole job is to search your internal knowledge base, another that summarizes technical documents, and a third that drafts customer emails. These agents can then be orchestrated in a multi-agent system, passing tasks to one another to solve a problem that would be impossible for a single agent to handle. This modular approach is the foundation of modern AI agent frameworks like LangChain and LlamaIndex.
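To make the hand-off idea concrete, here is a minimal Python sketch of three single-purpose agents chained by a simple orchestrator. Everything in it is an illustrative assumption: the `Agent` class, the `orchestrate` function, and the three stub functions are hypothetical stand-ins for LLM-backed calls, and none of it reflects the actual API of LangChain, LlamaIndex, or any other framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    """A worker with one narrow job. `run` stands in for an LLM-backed call."""
    name: str
    run: Callable[[str], str]


# Hypothetical stubs; real agents would call a model or a search index.
def search_knowledge_base(query: str) -> str:
    docs = {"refund": "Refunds are issued within 14 days of a return."}
    hits = [text for key, text in docs.items() if key in query.lower()]
    return " ".join(hits) or "No matching documents."


def summarize(text: str) -> str:
    # Toy summary: keep only the first sentence.
    return text.split(".")[0].strip() + "."


def draft_email(summary: str) -> str:
    return f"Hello,\n\n{summary}\n\nBest regards,\nSupport"


def orchestrate(task: str, pipeline: list[Agent]) -> tuple[str, list[str]]:
    """Pass the task through each agent in turn and record who handled it."""
    trace: list[str] = []
    payload = task
    for agent in pipeline:
        payload = agent.run(payload)
        trace.append(agent.name)
    return payload, trace


pipeline = [
    Agent("searcher", search_knowledge_base),
    Agent("summarizer", summarize),
    Agent("email_writer", draft_email),
]
email, trace = orchestrate("How long does a refund take?", pipeline)
print(trace)
print(email)
```

A fixed pipeline is the simplest form of orchestration. Real multi-agent systems often let a coordinating agent decide which specialist to call next, but the separation of responsibilities is the same.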

Retrieval-Augmented Generation (RAG)

Two of the biggest weaknesses of LLMs are that their knowledge is frozen at training time and that they are prone to making things up (hallucinating). Retrieval-Augmented Generation (RAG) targets both. With RAG, the AI system first retrieves relevant, up-to-date information from an external knowledge source (like a company’s document repository, a product database, or the live internet) and passes it to the model alongside the question before a response is generated. This grounds the LLM’s output in factual, current data, which generally improves accuracy and trustworthiness. For example, a customer service bot using RAG can pull a user’s specific order history from a database to provide a precise, accurate update.
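The retrieve-then-generate flow can be sketched in a few lines of Python. This is a toy version under stated assumptions: the `DOCUMENTS` list is invented sample data, `retrieve` ranks by simple word overlap where a production system would more likely use embeddings and a vector store, and the final model call is left out, so the sketch stops at the grounded prompt.

```python
import re

# Invented sample knowledge base for illustration only.
DOCUMENTS = [
    "Order 1042 shipped on Monday and arrives Thursday.",
    "Our return policy allows returns within 30 days.",
    "Support is available on weekdays from 9 to 5.",
]


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Rank documents by word overlap with the query (a stand-in for vector search)."""
    query_tokens = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Put the retrieved text in front of the question so the model can ground its answer."""
    context_block = "\n".join(f"- {passage}" for passage in context)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {query}"
    )


question = "When does order 1042 arrive?"
prompt = build_prompt(question, retrieve(question, DOCUMENTS))
print(prompt)
```

The prompt that reaches the model is short and specific to the question, which is the opposite of packing every possibly relevant document into one request.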

Tool Use and Function Calling

This is where structured AI truly comes to life. Modern LLMs like Google’s Gemini and OpenAI’s GPT-4 can use external “tools,” which are essentially APIs that connect the AI to other software or data sources. The model decides when it needs a tool and what information to send to it; the application then executes the call and returns the result, which the model uses in its final response. A travel planning agent, for instance, could be given tools to access a flight booking API, a weather API, and a hotel reservation system. The user simply says, “Book me a trip to London next week,” and the agent uses these tools in the appropriate sequence to fulfill the request.
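Here is a rough Python sketch of the dispatch side of tool use. It rests on several assumptions: `fake_model` is a hard-coded stand-in for an LLM, the JSON shape it returns is illustrative and is not the wire format of Gemini or GPT-4, and `get_weather` and `search_flights` are hypothetical tools that return canned strings.

```python
import json
from typing import Callable


# Hypothetical tools; real ones would call external APIs.
def get_weather(city: str) -> str:
    return f"Forecast for {city}: mild, light rain"


def search_flights(destination: str, date: str) -> str:
    return f"Found 3 flights to {destination} ({date})"


TOOLS: dict[str, Callable[..., str]] = {
    "get_weather": get_weather,
    "search_flights": search_flights,
}


def fake_model(user_message: str) -> str:
    """Stand-in for an LLM: returns a structured request naming a tool and its arguments."""
    if "weather" in user_message.lower():
        return json.dumps({"tool": "get_weather", "arguments": {"city": "London"}})
    return json.dumps(
        {
            "tool": "search_flights",
            "arguments": {"destination": "London", "date": "next week"},
        }
    )


def handle(user_message: str) -> str:
    """Parse the model's request, look up the tool, and execute it in application code."""
    call = json.loads(fake_model(user_message))
    tool = TOOLS.get(call["tool"])
    if tool is None:
        raise ValueError(f"Model requested an unknown tool: {call['tool']}")
    return tool(**call["arguments"])


print(handle("Book me a trip to London next week"))
print(handle("What is the weather like there?"))
```

In a full system, the tool result would be sent back to the model so it can decide on the next call or write the final answer.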

Specialized and Fine-Tuned Models

The “one model to rule them all” idea is fading. A structured AI approach recognizes that it’s often more efficient and effective to use a mix of models. You might use a powerful, large model like Gemini Ultra for complex reasoning and planning, but route simpler tasks, such as sentiment analysis or data extraction, to smaller, faster, and cheaper models that have been fine-tuned for that specific job. This model-routing approach (not to be confused with the “mixture of experts” architecture used inside some individual models) optimizes for both cost and performance, using the right tool for the right job.
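A model router can be as simple as a lookup on task type. The Python sketch below is illustrative only: the model names are placeholders, the `relative_cost` figures are invented to show the arithmetic, and a real router might classify tasks with a model rather than a fixed set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    name: str
    relative_cost: int  # invented units, for illustration only


# Placeholder tiers, not real product names or prices.
SMALL = ModelChoice("small-finetuned-model", 1)
LARGE = ModelChoice("large-reasoning-model", 20)

SIMPLE_TASKS = {"sentiment_analysis", "data_extraction"}


def route(task_type: str) -> ModelChoice:
    """Send routine tasks to the small model and everything else to the large one."""
    return SMALL if task_type in SIMPLE_TASKS else LARGE


tasks = ["sentiment_analysis", "planning", "data_extraction"]
routed_cost = sum(route(task).relative_cost for task in tasks)
baseline_cost = LARGE.relative_cost * len(tasks)

print(f"routed: {routed_cost}, large model for everything: {baseline_cost}")
```

With these made-up numbers, routing two of the three tasks to the small model costs 22 units instead of 60. The actual savings depend entirely on real pricing and on how many tasks are simple enough to route down.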

Google’s Vision: A Case Study in Structured AI

Google’s recent I/O conference was a masterclass in its structured AI vision. Instead of just announcing a bigger, better chatbot, they showcased an entire ecosystem of interconnected components designed for building sophisticated AI systems.

Project Astra is the flagship example. Google presented it not as a simple LLM but as a “universal AI agent”: it can see the world through a camera, understand spoken language, remember what it has seen before (persistent memory), and take actions. This is a multimodal agent that integrates different AI capabilities into a cohesive whole.

The Gemini family of models (Ultra, Pro, and Nano) embodies the specialized model approach. Google isn’t pushing one model for everything. They offer a range of options, from the most powerful data center models to lightweight on-device models, allowing developers to choose the right balance of performance and efficiency for their application.

Finally, tools like Vertex AI Agent Builder provide the practical infrastructure for this vision. This platform is explicitly designed to help developers build and deploy agents that are grounded in their enterprise data (RAG) and integrated with their existing applications and APIs (tool use). This is a clear signal that the Google AI strategy is focused on enabling developers to build structured, agent-based systems, not just write better prompts.

The Evolution of the Developer: From Prompt Engineer to AI Orchestrator

This paradigm shift has profound implications for developers and engineers. The role of the “prompt engineer” is not disappearing, but it is being absorbed into a much larger and more technical discipline. The future of prompt engineering is less about creative writing and more about system design.

The new role is that of an AI Orchestrator or AI Systems Architect. This person’s job isn’t to write one perfect prompt but to design the entire AI-powered workflow. Their responsibilities include:

- System Design: Decomposing a business problem into a series of steps that can be handled by different agents, tools, or models.

- Model Selection: Choosing the right LLM or combination of models for each task in the workflow, balancing cost, latency, and capability.

- Data Integration: Building the RAG pipeline to ensure agents have access to timely and accurate information.

- Tool Development: Creating and securing the APIs that agents will use to interact with the outside world.

- State Management: Designing how the system will maintain memory and context across a multi-step conversation or task.
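Of the responsibilities above, state management is the easiest to picture in code. The following Python sketch assumes a hypothetical `SessionState` class that keeps a bounded window of recent turns plus a small store of long-lived facts. It is one possible design, not a prescribed one.

```python
from collections import deque


class SessionState:
    """Keeps a bounded window of recent turns plus facts that should outlive them."""

    def __init__(self, max_turns: int = 3) -> None:
        # A deque with maxlen silently drops the oldest turn when full.
        self.turns: deque[tuple[str, str]] = deque(maxlen=max_turns)
        self.facts: dict[str, str] = {}

    def add_turn(self, role: str, text: str) -> None:
        self.turns.append((role, text))

    def remember(self, key: str, value: str) -> None:
        self.facts[key] = value

    def as_context(self) -> str:
        """Render the state as text that can be prepended to the next model call."""
        fact_lines = [f"{key}: {value}" for key, value in self.facts.items()]
        turn_lines = [f"{role}: {text}" for role, text in self.turns]
        return "\n".join(["Known facts:", *fact_lines, "Recent turns:", *turn_lines])


state = SessionState(max_turns=2)
state.remember("order_id", "1042")
state.add_turn("user", "Hi, I have a question about my order.")
state.add_turn("assistant", "Sure, what would you like to know?")
state.add_turn("user", "When will it arrive?")
context = state.as_context()
print(context)
```

The oldest turn falls out of the window, but the order number survives because it was stored as a fact. Deciding what belongs in each bucket is the actual design work.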

This is a true software engineering discipline that requires a deep understanding of system architecture, data structures, and API design, transforming AI development from a niche art into a core engineering practice.

Benefits and Challenges of a Structured AI Strategy

Adopting a structured approach offers immense advantages for businesses looking to build meaningful AI solutions, but it also comes with a new set of challenges.

The Upside: Why Your Business Should Make the Shift

- Reliability and Predictability: By breaking down tasks and grounding responses in real data, structured systems are far less prone to hallucination and produce more consistent, trustworthy results.

- Scalability and Maintainability: A modular, agent-based architecture is far easier to debug, update, and scale than a monolithic prompt. You can upgrade the “billing inquiry” agent without affecting the “technical support” agent.

- Enhanced Capabilities: These systems can perform complex, multi-step tasks in the real world—automating workflows, managing logistics, and interacting with other software—that are simply impossible with a single prompt call.

- Efficiency: Using smaller, specialized models for routine tasks can significantly reduce operational costs and improve response times compared to relying exclusively on a large, expensive model.

The Hurdles: What to Watch Out For

- Increased Complexity: Designing, building, and debugging a multi-agent system is inherently more complex than writing a prompt. It requires a dedicated engineering effort.

- Latency: Each step in the chain—a RAG lookup, a tool call, a model-to-model handoff—adds latency. Optimizing these systems for speed is a critical challenge.

- Security: Granting an AI agent the ability to use tools like “send email” or “update database” introduces new security vectors. Robust authentication, authorization, and validation are essential to prevent misuse.

- Observability: When a task fails, tracing the point of failure across a complex chain of agents and API calls can be difficult without proper logging and monitoring tools.
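Three of these hurdles (latency, security, and observability) can be handled at a single choke point: a wrapper around every tool call. The Python sketch below is a minimal illustration under assumptions: `PERMISSIONS`, `REGISTRY`, and `guarded_call` are hypothetical names, the tools are stubs, and real systems would add argument validation, authentication, and structured tracing on top.

```python
import logging
import time
from typing import Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent.tools")


# Stub tools for illustration.
def lookup_order(order_id: str) -> str:
    return f"Order {order_id}: shipped"


def send_email(to: str, body: str) -> str:
    return f"Email sent to {to}"


REGISTRY: dict[str, Callable[..., str]] = {
    "lookup_order": lookup_order,
    "send_email": send_email,
}

# Authorization: each agent may call only the tools listed for it.
PERMISSIONS: dict[str, set[str]] = {"support_agent": {"lookup_order"}}


def guarded_call(agent: str, tool_name: str, **kwargs: str) -> str:
    """Check permissions, run the tool, and log the outcome and latency."""
    if tool_name not in PERMISSIONS.get(agent, set()):
        log.warning("denied agent=%s tool=%s", agent, tool_name)
        raise PermissionError(f"{agent} may not call {tool_name}")
    start = time.perf_counter()
    try:
        result = REGISTRY[tool_name](**kwargs)
    except Exception:
        log.exception("failed agent=%s tool=%s", agent, tool_name)
        raise
    elapsed_ms = (time.perf_counter() - start) * 1000
    log.info("ok agent=%s tool=%s latency_ms=%.2f", agent, tool_name, elapsed_ms)
    return result


print(guarded_call("support_agent", "lookup_order", order_id="1042"))
```

Because every call passes through one function, a denied or failed call leaves a log line naming the agent and the tool, which is the starting point for tracing failures across a longer chain.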

Frequently Asked Questions (FAQ)

Is prompt engineering dead?

Not at all, but it is evolving. Instead of crafting one master “everything prompt,” the focus is now on writing clear, concise, and effective prompts for each individual agent or tool call within the larger system. It’s becoming more targeted and technical—a component of system design rather than the entire process.

What is an AI agent framework?

An AI agent framework is a library or toolkit (like LangChain, LlamaIndex, or Microsoft’s AutoGen) that provides developers with pre-built components and structures to simplify the creation of structured AI systems. Such frameworks handle things like agent logic, memory, tool integration, and chaining, allowing developers to focus on the business problem rather than the underlying plumbing.

How is this different from just chaining API calls in a traditional script?

The key difference is the LLM’s autonomous reasoning and planning ability. In a traditional script, the developer hard-codes the exact sequence of API calls. In an agent-based system, the developer provides the agent with a goal and a set of available tools, and the LLM itself determines the best sequence of actions and tool uses to achieve that goal, adapting its plan as it receives new information.
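The contrast can be shown side by side in Python. In this sketch, `scripted` hard-codes the call sequence, while `agent_loop` asks a planner what to do next after each observation. The planner here, `stub_planner`, is a hand-written stand-in for the LLM, and the `STOCK` data and both tools are invented for illustration.

```python
from typing import Optional

# Invented inventory data for illustration.
STOCK = {"widget": 4, "gadget": 0}


def check_inventory(item: str) -> str:
    return f"{item}: {STOCK.get(item, 0)} in stock"


def place_order(item: str) -> str:
    return f"Ordered 1 {item}"


TOOLS = {"check_inventory": check_inventory, "place_order": place_order}


def scripted(item: str) -> list[str]:
    """Traditional script: the developer fixes the sequence in advance."""
    return [check_inventory(item), place_order(item)]


def stub_planner(item: str, observations: list[str]) -> Optional[tuple[str, str]]:
    """Stand-in for the LLM: choose the next action from what has been observed so far."""
    if not observations:
        return ("check_inventory", item)
    if observations[-1].endswith(": 0 in stock"):
        return None  # nothing to order, so stop early
    if len(observations) == 1:
        return ("place_order", item)
    return None  # goal reached


def agent_loop(item: str, max_steps: int = 5) -> list[str]:
    """Agent loop: act, observe, and let the planner pick the next step."""
    observations: list[str] = []
    for _ in range(max_steps):
        action = stub_planner(item, observations)
        if action is None:
            break
        tool_name, argument = action
        observations.append(TOOLS[tool_name](argument))
    return observations


print(scripted("gadget"))
print(agent_loop("gadget"))
print(agent_loop("widget"))
```

The script places an order for an out-of-stock item because nothing tells it to stop. The loop stops after the inventory check, since the next step is chosen from the observation rather than fixed in advance.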

Can a small business implement a structured AI strategy?

Absolutely. The rise of powerful open-source models and accessible agent frameworks means that building sophisticated AI agents is no longer the exclusive domain of tech giants. Small and medium-sized businesses can build custom agents to automate internal workflows, enhance customer support, and create powerful new product features.

Conclusion: From Prompting to Engineering

The shift away from the “everything prompt” is not just an incremental improvement; it’s a fundamental change in how we build with AI. It marks the maturation of the field from experimental tinkering to disciplined engineering. A structured AI development strategy is the only sustainable path toward creating AI applications that are reliable, scalable, and genuinely useful in solving complex, real-world problems.

This new era demands a new set of skills, blending creative problem-solving with rigorous system design. It’s an exciting time for developers and businesses alike, opening up possibilities that were unimaginable just a few years ago. The future isn’t about finding the magic words; it’s about building the right machine.
