The Black Box Problem: Why AI-Generated Code Stops Being Maintainable

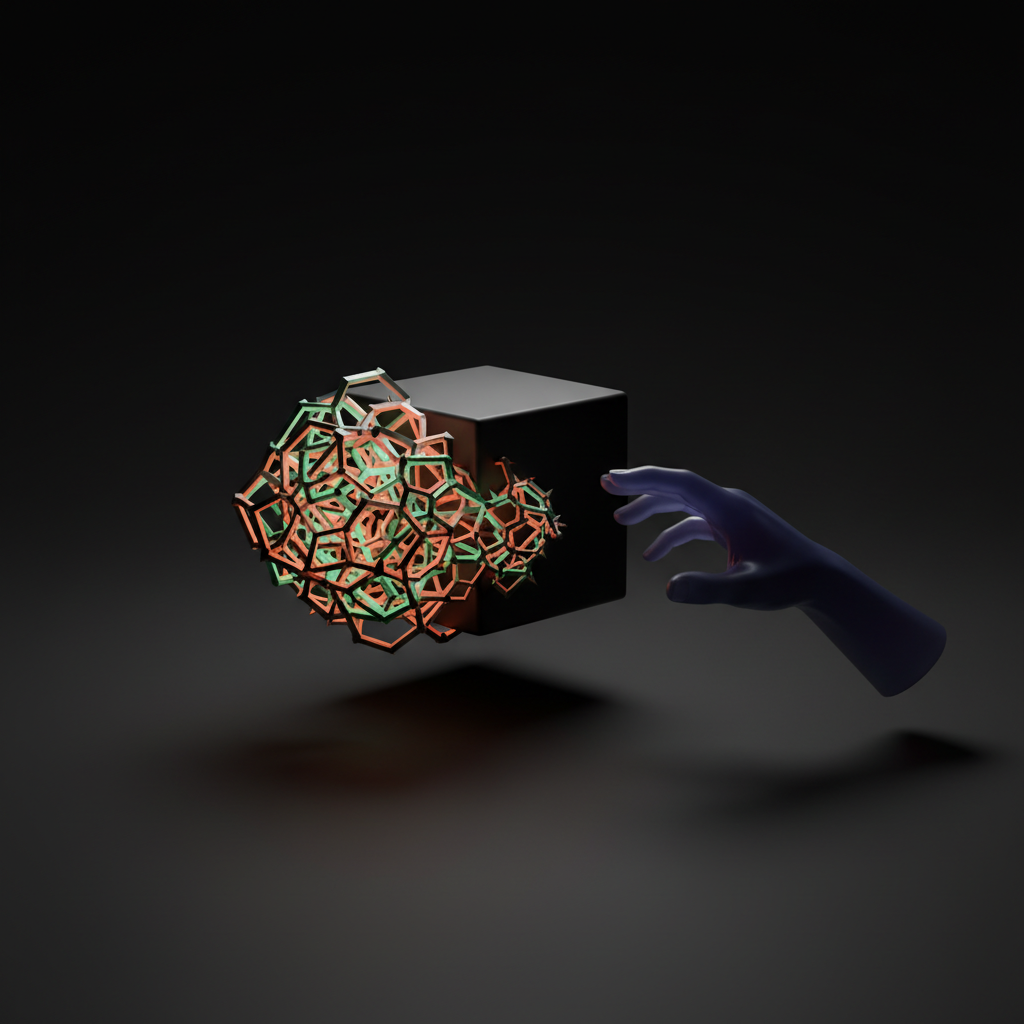

An AI coding assistant generates a complex algorithm in seconds, solving a problem that would have taken a human developer hours. It passes all the initial tests, and with a click, it’s merged into the main branch. The team celebrates the productivity boost. Fast forward six months: a critical bug emerges in production, and it’s traced back to that very same block of code. Now a different developer is staring at it, completely baffled. The code works, but no one on the team understands why it works the way it does. This scenario highlights a growing problem in software development: the maintainability of AI-generated code. As we increasingly rely on AI to write our software, we are introducing “black boxes” into our codebases: pieces of logic that appear functionally correct but are intellectually opaque, creating a ticking time bomb for long-term project health.

Understanding the ‘Black Box’ in AI-Generated Code

In the context of artificial intelligence, a “black box” refers to a system where the internal workings are hidden or incomprehensible to an observer. You provide an input, you receive an output, but the process in between is a mystery. When applied to AI code generation tools like GitHub Copilot or Amazon CodeWhisperer, this concept takes on a practical and often problematic meaning for engineering teams.

These large language models (LLMs) are not sentient beings that “understand” programming paradigms or architectural principles. They are incredibly sophisticated pattern-matching machines. Trained on billions of lines of public code from sources like GitHub, they learn to predict the most statistically probable sequence of code tokens based on the context you provide (your existing code, comments, and prompts).

The result is often code that is:

- Syntactically Correct: The code compiles and runs without errors for the common use case.

- Functionally Plausible: It appears to solve the immediate problem at hand.

- Logically Opaque: The underlying reasoning—the “why” behind a specific choice of algorithm, data structure, or control flow—is completely absent.

The AI isn’t engineering a solution; it’s mimicking solutions it has seen before. This distinction is critical. A human engineer makes conscious trade-offs regarding performance, readability, and future flexibility. An AI model simply provides the most likely answer, which might be clever and efficient, or it might be a convoluted, brittle piece of logic that only works by coincidence for the specific inputs it was prompted with. This is the essence of the black box problem in AI-assisted software development.

The Hidden Costs: Key Maintainability Challenges

While the immediate productivity gains are tempting, the long-term costs of hard-to-maintain AI-generated code can quickly negate those benefits. Integrating these black boxes into a living, breathing codebase introduces several specific challenges that engineering teams must confront.

Lack of Intent and Rationale

The most significant issue is the absence of intent. Human-written code, at its best, tells a story. Variable names, function structures, and comments work together to explain not just what the code does, but why it was designed that way. AI-generated code offers no such narrative. It might produce a highly optimized function using bitwise operations, but it won’t explain why that approach was chosen over a more readable, straightforward one. When a future developer needs to modify or debug this code, they must first spend an inordinate amount of time reverse-engineering its purpose, a process fraught with risk and inefficiency.
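
To make the gap between "what" and "why" concrete, here is a minimal Python sketch. Both functions are hypothetical illustrations, not output captured from any particular assistant, and the rationale in the docstring is an assumed example of the kind of intent a maintainer would want recorded.

```python
def is_pow2(n: int) -> bool:
    # Opaque version: correct, but nothing tells the reader why it works
    # or why a bit trick was preferred over a simple loop.
    return n > 0 and (n & (n - 1)) == 0


def is_power_of_two(n: int) -> bool:
    """Return True if n is a positive power of two (1, 2, 4, 8, ...).

    How it works: a power of two has exactly one bit set. Subtracting 1
    clears that bit and sets every lower bit, so n & (n - 1) is zero only
    when a single bit was set.

    Assumed rationale (hypothetical): the check avoids a loop because it
    is called very frequently; a loop-based version would also be fine
    where readability matters more than speed.
    """
    if n <= 0:
        # Zero and negative numbers are never powers of two.
        return False
    return (n & (n - 1)) == 0
```

The two functions behave identically. Only the second one tells a future reader what was intended and which trade-off was accepted.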

Inconsistent Quality, Style, and Patterns

An AI model’s training data is a chaotic mix of coding styles, standards, and paradigms from millions of different projects. Consequently, the code it generates can be wildly inconsistent. In a single session, it might produce a clean, functional-style snippet followed by a verbose, object-oriented class that completely contradicts the project’s established architectural patterns. This stylistic whiplash pollutes the codebase, making it harder to read and reason about. It erodes the team’s carefully constructed coding standards, leading to a “broken windows” effect where code quality degrades over time.

Subtle Bugs and Edge Case Blindness

One of the biggest quality risks with AI-generated code is its weakness with edge cases. The model generates code that works for the “happy path” because that’s what’s most common in its training data. However, it often fails to account for null inputs, empty arrays, concurrency issues, or other boundary conditions that a seasoned developer would instinctively check. These subtle bugs can lie dormant for months, only surfacing under specific production loads or with unexpected user inputs. Because the developer who accepted the code didn’t go through the mental exercise of considering these edge cases, the bugs are often missed during the initial review process.
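
A small Python sketch of the happy-path pattern described above. The function names are hypothetical, and returning `None` for missing or empty input is an assumed design choice; raising an exception would be equally defensible depending on the caller.

```python
from typing import Optional, Sequence


def average_happy_path(values):
    # Fine for a non-empty list of numbers, but raises ZeroDivisionError
    # on an empty list and TypeError when values is None.
    return sum(values) / len(values)


def average(values: Optional[Sequence[float]]) -> Optional[float]:
    """Return the mean of values, or None when there is nothing to average."""
    if not values:
        # Covers both None and an empty sequence (assumed policy).
        return None
    return sum(values) / len(values)
```

Both versions agree on ordinary input such as `[1, 2, 3]`. They differ only on the boundary inputs that a quick review is most likely to skip.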

Dependency on Obscure or Deprecated Practices

An LLM’s knowledge is frozen at the point its training data was collected. This means it can confidently recommend using libraries that are now unmaintained, deprecated functions that have known security vulnerabilities, or anti-patterns that the community has since moved away from. A junior developer, trusting the AI’s suggestion, might inadvertently introduce significant technical debt or security risks into the project. Without a senior developer’s oversight to catch these anachronisms, the codebase’s health can quickly deteriorate.
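
One partial safeguard is to make deprecation warnings fail loudly during testing. The sketch below uses Python's standard `warnings` module; `legacy_hash` and `uses_deprecated_api` are hypothetical names invented for illustration, with `legacy_hash` standing in for any outdated library call. This only catches deprecations that a library announces through warnings, so it complements manual vetting rather than replacing it.

```python
import warnings


def legacy_hash(data: bytes) -> int:
    """Hypothetical deprecated helper, standing in for an outdated API."""
    warnings.warn(
        "legacy_hash is deprecated; use a maintained alternative",
        DeprecationWarning,
        stacklevel=2,
    )
    return sum(data) % 256


def uses_deprecated_api(func, *args) -> bool:
    """Return True if calling func(*args) emits a DeprecationWarning."""
    with warnings.catch_warnings():
        # Inside this block, a DeprecationWarning is raised as an exception.
        warnings.simplefilter("error", DeprecationWarning)
        try:
            func(*args)
        except DeprecationWarning:
            return True
    return False
```

For example, `uses_deprecated_api(legacy_hash, b"abc")` reports the deprecated call, while `uses_deprecated_api(len, b"abc")` does not.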

The Human Factor: How AI Impacts Team Dynamics and Skills

The integration of AI into the software development lifecycle doesn’t just affect the code; it fundamentally changes how developers work, learn, and collaborate. While the promise of enhanced developer productivity is real, it comes with cultural and professional challenges that cannot be ignored.

The Risk of Deskilling and Intellectual Stagnation

For junior and mid-level developers, a significant part of their growth comes from struggling with a problem, researching different solutions, and understanding the underlying principles. Over-reliance on AI assistants can short-circuit this crucial learning process. Instead of learning how to design an algorithm, they learn how to write a prompt to generate one. This can lead to a generation of developers who are proficient at using tools but lack a deep, foundational understanding of computer science and software engineering principles. Their problem-solving “muscles” may atrophy, making them less capable of tackling novel or truly complex challenges where AI cannot provide a ready-made solution.

The Ownership Vacuum: Who Is Responsible for AI’s Mistakes?

When a bug is found in human-written code, the line of responsibility is clear. But what happens when the faulty code was generated by an AI? The developer who accepted the suggestion is technically responsible, but a dangerous diffusion of accountability can occur. It’s easy to mentally offload the “thinking” part to the machine, leading to less critical evaluation of its output. This creates an “ownership vacuum” where no one feels fully accountable for the logic, making bug-fixing sessions tense and blame-oriented. Establishing a clear policy that “if you commit it, you own it” is essential to combat this trend.

Practical Strategies for Taming the Black Box

The goal isn’t to reject these powerful tools but to integrate them intelligently. Teams can mitigate the maintainability risks of AI-generated code by establishing clear processes and best practices. The focus must shift from maximizing the quantity of generated code to ensuring the quality and comprehensibility of the final committed product.

1. Treat the AI as a Suggestible Pair Programmer, Not an Oracle

The most important mindset shift is to view the AI as a junior pair programmer—one that is incredibly fast and has seen a lot of code, but lacks context, judgment, and a sense of long-term consequences. The human developer must always be the senior partner in this relationship, responsible for guiding, questioning, and ultimately vetoing or refining the AI’s suggestions. The developer’s role is not to passively accept code, but to actively curate it.

2. Mandate Rigorous Refactoring and Annotation

A strict rule should be enforced: never commit raw AI-generated code. Any code snippet suggested by an AI must be treated as a rough first draft. The developer’s job is to:

- Refactor for Readability: Rename variables, break down large functions, and reformat the code to perfectly match the team’s established style guide.

- Add Explanatory Comments: The most crucial step is to add comments that explain the why. If the AI produced a complex one-liner, the developer must add a comment explaining the logic, the trade-offs, and the intent behind it. This act of documentation forces the developer to fully understand the code they are committing.

- Verify Dependencies: If the AI suggests a new library or dependency, the developer must vet it for security, maintenance status, and suitability for the project.
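
As a rough illustration of the first two steps, the Python sketch below shows a dense draft next to a refactored, annotated version. Everything here is hypothetical: the function names, the data shape, and the business rule that keys starting with an underscore denote internal accounts are assumptions made for the example.

```python
def proc(d):
    # Raw draft: works, but the names and filters explain nothing.
    return {k: sum(v) / len(v) for k, v in d.items() if v and k[0] != "_"}


def average_scores_by_user(scores_by_user: dict[str, list[float]]) -> dict[str, float]:
    """Return the average score for each user that has at least one score.

    Why the filters (assumed rules for this example):
    - Keys starting with "_" are internal accounts and are not reported.
    - Users with no scores are skipped to avoid dividing by zero.
    """
    averages: dict[str, float] = {}
    for user, scores in scores_by_user.items():
        # startswith also copes with an empty key, where k[0] would raise.
        is_internal = user.startswith("_")
        if is_internal or not scores:
            continue
        averages[user] = sum(scores) / len(scores)
    return averages
```

Writing the docstring is where the developer has to decide whether each filter is actually wanted, which is exactly the understanding the rule is meant to force.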

3. Enhance Code Reviews with an AI-Specific Checklist

Standard code reviews need to be adapted for the age of AI. In addition to normal checks, reviews for code involving AI contributions should ask specific questions:

- “Can the author clearly explain the logic of this function line by line?”

- “Have we considered edge cases that the AI might have missed (e.g., nulls, empty inputs, concurrency)?”

- “Does this code align with our long-term architectural goals, or is it a clever but tactical hack?”

- “Is this a ‘black box,’ or is it understandable by any developer on the team?”

4. Embrace Test-Driven Development (TDD)

TDD provides a powerful framework for safely incorporating AI-generated code. By writing comprehensive tests before generating the code, the developer defines a clear, unambiguous contract for what the code must do. They can then use the AI assistant to help generate an implementation that passes those tests. This process inherently forces the developer to think through requirements and edge cases upfront, using the AI as a tool to fulfill a well-defined specification rather than as a source of unspecified logic.
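
A minimal sketch of that workflow in Python, using plain `assert`-based test functions in the style that pytest would collect. The `chunk` function and its contract are hypothetical. The tests are written first and pin down the remainder, empty-input, and invalid-size cases; the implementation underneath is one candidate that satisfies them, whether typed by hand or suggested by an assistant.

```python
# Step 1: the tests define the contract before any implementation exists.
def test_chunk_splits_evenly():
    assert chunk([1, 2, 3, 4], 2) == [[1, 2], [3, 4]]


def test_chunk_keeps_remainder():
    assert chunk([1, 2, 3], 2) == [[1, 2], [3]]


def test_chunk_empty_input():
    assert chunk([], 3) == []


def test_chunk_rejects_non_positive_size():
    try:
        chunk([1], 0)
    except ValueError:
        return
    raise AssertionError("expected ValueError for size <= 0")


# Step 2: a candidate implementation, accepted only once the tests pass.
def chunk(items, size):
    """Split items into consecutive lists of at most size elements."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [items[i:i + size] for i in range(0, len(items), size)]
```

If a generated implementation silently dropped the remainder or looped forever on a size of zero, the second and fourth tests would catch it before review.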

Frequently Asked Questions (FAQ)

Is AI-generated code always bad for maintainability?

Not inherently, but it carries risks that are less common in code a developer has reasoned through personally. For boilerplate, simple functions, or well-defined algorithms, it can be perfectly fine. The danger lies in using it for complex, business-critical logic without a rigorous process of understanding, refactoring, and testing. The problem isn’t the code itself, but the lack of human understanding behind it.

How can junior developers use AI tools responsibly?

Junior developers should be encouraged to use AI as a learning tool, not a crutch. After getting a suggestion, they should take the time to research why it works. They can ask it to explain the code, suggest alternatives, and then try to rewrite the code themselves from scratch. This turns a passive act of copying into an active learning exercise.

What’s the single most important rule for using AI code assistants?

You are the owner. The moment you commit a piece of code to the repository, you are fully responsible for its quality, correctness, and maintainability, regardless of whether you or an AI wrote the first draft. There is no “the AI did it” defense in a production outage.

Can’t static analysis and linting tools fix AI code quality issues?

These tools are essential, but they mostly address surface-level issues such as style inconsistencies, unused variables, or well-known bug patterns. They cannot fix a fundamental flaw in logic, an incorrect choice of algorithm, or the complete absence of intent and readability. They are a safety net, not a solution to the core black box problem.
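
To illustrate the kind of flaw meant here, consider this hypothetical Python sketch. The first function is tidy, typed, and documented, so style and type checks would typically have nothing to report, yet it is only correct when the input happens to be sorted already. Both function names are invented for the example.

```python
def median_buggy(values: list[float]) -> float:
    """Return the median of values."""
    # Logic flaw: assumes values is already sorted.
    middle = len(values) // 2
    if len(values) % 2:
        return values[middle]
    return (values[middle - 1] + values[middle]) / 2


def median(values: list[float]) -> float:
    """Return the median of values, which may be in any order."""
    if not values:
        raise ValueError("median of an empty list is undefined")
    ordered = sorted(values)  # sort a copy; the caller's list is untouched
    middle = len(ordered) // 2
    if len(ordered) % 2:
        return ordered[middle]
    return (ordered[middle - 1] + ordered[middle]) / 2
```

A test with sorted input such as `[1, 2, 3]` passes for both versions. Only a reviewer or a test that asks what happens with unsorted input exposes the difference.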

Conclusion: From Generation to Curation

AI coding assistants are undeniably powerful tools that are reshaping the landscape of software engineering. They can handle tedious tasks, accelerate development, and help developers explore new solutions. However, the excitement around this productivity boom often obscures the profound, long-term risks to code quality and maintainability. The “black box” nature of AI-generated code, with its lack of intent and potential for subtle errors, threatens to create fragile, incomprehensible systems that will be a nightmare to support in the future.

The solution is not to ban these tools, but to adopt a disciplined, human-centric approach to their use. By treating AI as a pair programmer, enforcing strict refactoring and documentation standards, and fostering a culture of deep ownership, we can harness its power without sacrificing the principles of good software engineering. The role of the developer is evolving from a pure creator of code to a sophisticated curator of human-AI collaboration.

At KleverOwl, we believe in building software that is not only powerful and efficient today but also robust and maintainable for years to come. If you’re looking to build mission-critical applications and want a partner who understands how to responsibly integrate AI into your development process, our experts are here to help. Explore our AI & Automation solutions or contact us today to discuss how we can build your next great project with quality and longevity in mind.