The Checkered Flag and the Cloud: How the Mercedes F1 Team Processes 1.1M Data Points a Second with Microsoft AI

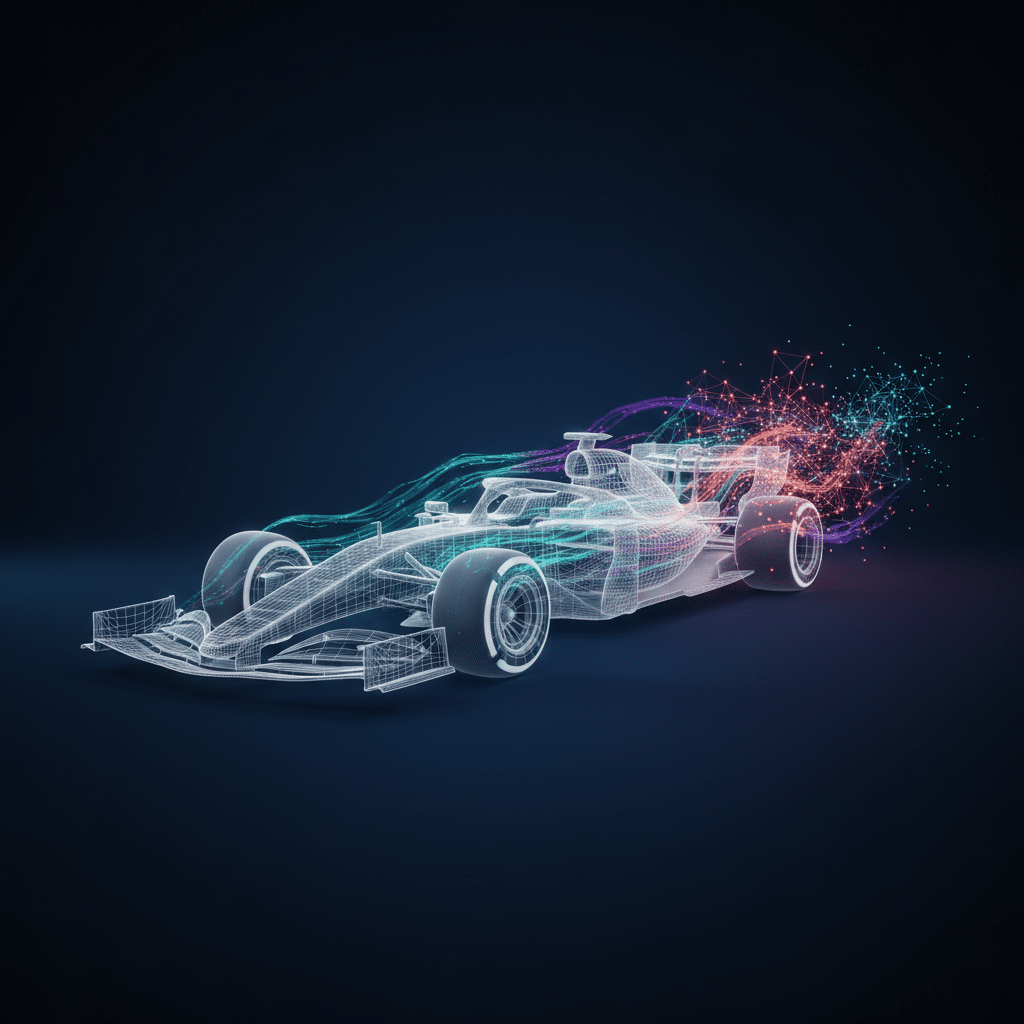

In the world of Formula 1, victory is measured in thousandths of a second. The deafening roar of the engines and the astonishing skill of the drivers are what capture our attention, but behind the scenes an equally high-speed battle is being waged: a battle of data. The Mercedes-AMG Petronas F1 team, a dominant force in the sport, understands that modern racing is as much about silicon as it is about carbon fiber. This understanding is the foundation of the Microsoft AI F1 partnership, a collaboration that sees the team processing 1.1 million data points every second. This isn’t just about collecting data; it’s about transforming a firehose of telemetry into actionable intelligence in real time. This post explores the cloud and DevOps architecture and the MLOps practices that help Mercedes make race-winning decisions, turning raw numbers into performance.

Anatomy of the F1 Data Tsunami

To appreciate the scale of the challenge, we first need to understand the source of this data deluge. An F1 car is a flying data center. It’s equipped with over 300 sensors that monitor every conceivable aspect of its performance and health. This constant stream of information is the lifeblood of the team’s strategy, both on and off the track.

From Tires to Telemetry

The data collected is incredibly diverse and granular. Key data streams include:

- Aerodynamics: Sensors measuring airflow and pressure across wings and bodywork to validate wind tunnel models and optimize downforce.

- Powertrain Performance: Data on engine RPM, temperature, fuel flow, and energy recovery system (ERS) deployment.

- Tire Dynamics: Critical information on tire pressure, core and surface temperatures, and wear rates, which directly influences pit stop strategy.

- Vehicle Dynamics: G-force measurements, suspension travel, and braking performance that help engineers and drivers fine-tune the car’s balance.

- Driver Inputs: Steering angle, throttle position, and brake application data provide insight into how the driver is interacting with the car.

During a typical race weekend, this torrent of information amounts to several terabytes of data. Processing this volume requires more than just powerful computers; it demands a robust edge computing strategy for telemetry, combined with a massively scalable cloud backend.

From the Pit Wall to the Cloud: The Azure Data Pipeline

Getting 1.1 million data points per second from a car moving at over 200 mph to analysts back at the factory in Brackley, UK, is an immense engineering feat. The solution is a hybrid approach that combines on-site processing with the hyperscale power of Microsoft Azure. This forms the backbone of the team’s Formula 1 cloud strategy.

Edge Computing in the Garage

Not all data can wait for a round trip to the cloud. Decisions needed in milliseconds, like alerting an engineer to a critical pressure drop, require immediate analysis. This is where edge computing comes in. The team utilizes a “garage data center,” a portable high-performance computing cluster at the racetrack. Here, services conceptually similar to Azure Stack Hub or Azure IoT Edge perform initial data aggregation, filtering, and low-latency analysis. This ensures that the most time-sensitive insights are delivered directly to the engineers on the pit wall without delay.
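To make the trackside alerting idea concrete, here is a minimal sketch of a low-latency check that could run at the edge. It is not the team's implementation: the `PressureDropDetector` class, the window size, and the 1.5 psi threshold are all hypothetical values chosen for illustration.

```python
from collections import deque
from typing import Deque, Iterable, List, Optional


class PressureDropDetector:
    """Flags a sudden pressure loss using a short sliding window of samples."""

    def __init__(self, window_size: int = 5, max_drop_psi: float = 1.5) -> None:
        # Both defaults are illustrative assumptions, not real team thresholds.
        self.window: Deque[float] = deque(maxlen=window_size)
        self.window_size = window_size
        self.max_drop_psi = max_drop_psi

    def update(self, pressure_psi: float) -> Optional[str]:
        """Add one sample; return an alert message if pressure fell too fast."""
        self.window.append(pressure_psi)
        if len(self.window) < self.window_size:
            return None  # not enough history yet
        # Compare the oldest sample in the window with the newest one.
        drop = self.window[0] - self.window[-1]
        if drop >= self.max_drop_psi:
            return f"ALERT: pressure fell {drop:.1f} psi within {self.window_size} samples"
        return None


def run_detector(samples: Iterable[float]) -> List[str]:
    """Feed samples through a fresh detector and collect any alerts."""
    detector = PressureDropDetector()
    return [msg for msg in (detector.update(s) for s in samples) if msg]
```

Because the check only touches a handful of recent samples, it can run next to the car without waiting on a cloud round trip.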

High-Speed Ingestion into the Azure Cloud

Simultaneously, the filtered and raw data streams are transmitted securely to the Microsoft Azure cloud. This is achieved using Azure ExpressRoute, a dedicated, private, high-bandwidth connection that bypasses the public internet, which helps with both speed and security. Once the data reaches the cloud boundary, services like Azure Event Hubs ingest the massive, concurrent streams of telemetry and queue them for large-scale processing. This infrastructure is the heart of the team’s real-time analytics capability in the cloud.
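High-throughput ingestion services generally accept events in size-bounded batches rather than one at a time. The sketch below illustrates that batching idea using only the Python standard library. It does not call the Azure SDK, and the `batch_by_size` helper, the sensor names, and the 200-byte limit are assumptions made purely for the example.

```python
import json
from typing import Dict, Iterable, Iterator, List


def batch_by_size(readings: Iterable[Dict], max_batch_bytes: int) -> Iterator[List[bytes]]:
    """Group JSON-encoded readings into batches that stay under a byte limit."""
    batch: List[bytes] = []
    batch_bytes = 0
    for reading in readings:
        payload = json.dumps(reading, separators=(",", ":")).encode("utf-8")
        if len(payload) > max_batch_bytes:
            raise ValueError("single reading exceeds the batch size limit")
        # Start a new batch when adding this payload would overflow the limit.
        if batch and batch_bytes + len(payload) > max_batch_bytes:
            yield batch
            batch, batch_bytes = [], 0
        batch.append(payload)
        batch_bytes += len(payload)
    if batch:
        yield batch  # flush the final, partially filled batch


# Synthetic readings; a real limit would be far larger than 200 bytes.
readings = [{"sensor": "tyre_fl_temp", "t": i, "value": 95.0 + i} for i in range(10)]
batches = list(batch_by_size(readings, max_batch_bytes=200))
```

In a real pipeline, each yielded batch would be handed to the ingestion client in a single send call, which keeps per-event overhead low.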

Processing and Storage at Scale

Inside Azure, the data is funneled into a sophisticated analytics pipeline. Azure Databricks, a powerful Apache Spark-based analytics platform, is used for complex data transformations and running machine learning models on live and historical data. The vast quantities of data, both structured sensor readings and unstructured data like race video, are stored in Azure Data Lake Storage, providing a cost-effective and highly scalable repository for training future AI models.
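A typical transformation at this stage is to roll raw sensor readings up into per-lap summaries. On a Spark-based platform this would be a distributed group-by; the plain-Python sketch below shows the same logic on a tiny synthetic dataset. The `summarize_by_lap` function and the `tyre_temp_c` sensor name are hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple

Reading = Tuple[int, str, float]  # (lap number, sensor name, value)


def summarize_by_lap(readings: List[Reading]) -> Dict[Tuple[int, str], Dict[str, float]]:
    """Compute min, mean, and max per (lap, sensor) pair."""
    grouped: Dict[Tuple[int, str], List[float]] = defaultdict(list)
    for lap, sensor, value in readings:
        grouped[(lap, sensor)].append(value)
    return {
        key: {"min": min(vals), "mean": mean(vals), "max": max(vals)}
        for key, vals in grouped.items()
    }


# Synthetic readings, for illustration only.
sample = [
    (1, "tyre_temp_c", 92.0),
    (1, "tyre_temp_c", 96.0),
    (2, "tyre_temp_c", 101.0),
    (2, "tyre_temp_c", 103.0),
]
summary = summarize_by_lap(sample)
```

Summaries like these are far smaller than the raw stream, which makes them convenient inputs for model training and dashboards.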

DevOps for High-Performance Computing: Engineering Resilience at 200 MPH

The world’s fastest data pipeline is useless if it’s not reliable. A system outage during a qualifying lap or a tight race could be disastrous. The Mercedes team applies rigorous DevOps principles to keep its infrastructure consistent, scalable, and resilient, which is essential for high-performance computing in such a demanding environment.

Infrastructure as Code (IaC) for Global Consistency

The F1 calendar spans the globe. The team needs to deploy identical, high-performance computing environments at every race, from Monaco to Melbourne. They use Infrastructure as Code (IaC) with tools like Azure Resource Manager (ARM) templates or Terraform. This allows them to define their entire cloud infrastructure—virtual machines, networks, storage, and services—in configuration files. With this approach, they can spin up a complete, race-ready environment in a new Azure region with predictable, repeatable results in a matter of hours, not days.
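The core idea of IaC is that one parameterized definition produces the same environment everywhere. As a rough sketch, the Python below renders an ARM-style template for different regions from a single function. The `render_environment` helper, the storage account naming scheme, and the chosen `apiVersion` are illustrative assumptions and should be checked against current Azure documentation before any real use.

```python
import json
from typing import Any, Dict


def render_environment(location: str) -> Dict[str, Any]:
    """Build an ARM-style template dict for one region (illustrative only)."""
    return {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        "resources": [
            {
                "type": "Microsoft.Storage/storageAccounts",
                "apiVersion": "2022-09-01",  # assumed version; verify before use
                # Hypothetical naming scheme, truncated to the 24-character limit.
                "name": f"telemetry{location}"[:24],
                "location": location,
                "sku": {"name": "Standard_LRS"},
                "kind": "StorageV2",
            }
        ],
    }


# The same definition rendered for two regions differs only in location and name.
melbourne = render_environment("australiaeast")
europe = render_environment("westeurope")
template_json = json.dumps(europe, indent=2)
```

Keeping the definition in version control means every change to the environment is reviewed and repeatable, rather than applied by hand at each venue.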

CI/CD for Rapid Innovation

The race for performance is relentless, and software is no exception. A new algorithm for predicting tire wear or a better data visualization tool for the strategists needs to be deployed quickly and safely. The team uses Continuous Integration/Continuous Deployment (CI/CD) pipelines, likely managed through Azure DevOps or GitHub Actions. Every code change is automatically built, tested, and deployed, enabling the team to iterate on their analytical tools throughout the race weekend, constantly seeking a competitive edge.

The MLOps Engine: Fueling Predictive Victory

Collecting and moving data is only half the story. The real value comes when machine learning is applied to predict outcomes and prescribe actions. This requires a mature Machine Learning Operations (MLOps) practice to manage the end-to-end lifecycle of the models that power the team’s race strategy.

Training and Retraining Models with Azure Machine Learning

Using Azure Machine Learning, data scientists at the factory build and train sophisticated models on terabytes of historical data. These models can predict everything from the optimal moment to pit, to how a change in wing angle will affect lap time, to the likely strategies of their competitors. The service provides a centralized workspace for managing data, experiments, and model versions, ensuring collaboration and reproducibility.
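As a toy illustration of the kind of model involved, the sketch below fits a straight line to lap time versus tire age using ordinary least squares. Real models are far richer and would be trained and tracked in a managed service; the data here is synthetic and the `fit_line` and `predict_lap_time` helpers are hypothetical.

```python
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_lap_time(tyre_age: float, slope: float, intercept: float) -> float:
    """Predicted lap time in seconds for a given tire age in laps."""
    return slope * tyre_age + intercept


# Synthetic data: lap time grows by about 0.1 s per lap of tire age.
tyre_age = [1.0, 2.0, 3.0, 4.0, 5.0]
lap_time = [90.0, 90.1, 90.2, 90.3, 90.4]
slope, intercept = fit_line(tyre_age, lap_time)
```

The fitted slope is the estimated degradation per lap, which is exactly the kind of quantity a pit strategy calculation needs as input.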

Real-Time Inference and Model Monitoring

During a race, these trained models are deployed to scalable compute targets like Azure Kubernetes Service (AKS). They receive live telemetry data and must return predictions, or “inferences,” in milliseconds. For example, a model constantly calculates the “pit stop window,” reassessing the optimal lap based on tire degradation, fuel load, and the position of other cars. Crucially, MLOps also involves continuous monitoring. A model trained on data from a hot, dry race in Bahrain might perform poorly in the cold, wet conditions of Spa. The system monitors for this “model drift” and can trigger alerts or even automatically retrain and redeploy a more suitable model.
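One simple way to detect the Bahrain-versus-Spa problem is to compare the live input data against the data the model was trained on. The sketch below measures how far the live mean of a feature has shifted, expressed in training standard deviations. The `drift_score` function, the threshold of 3.0, and the temperature values are assumptions for illustration; production monitoring typically uses more robust statistics.

```python
from statistics import mean, stdev
from typing import Sequence


def drift_score(training_values: Sequence[float], live_values: Sequence[float]) -> float:
    """Shift of the live mean from the training mean, in training standard deviations."""
    return abs(mean(live_values) - mean(training_values)) / stdev(training_values)


def needs_retraining(
    training_values: Sequence[float],
    live_values: Sequence[float],
    threshold: float = 3.0,  # illustrative cut-off, not a recommended value
) -> bool:
    """Return True when the live data has drifted beyond the threshold."""
    return drift_score(training_values, live_values) > threshold


# Synthetic track temperatures in degrees Celsius.
hot_dry_training = [38.0, 40.0, 41.0, 39.0, 42.0]
cold_wet_live = [14.0, 15.0, 13.0, 16.0]
similar_live = [39.0, 41.0, 40.0]

retrain_for_cold = needs_retraining(hot_dry_training, cold_wet_live)
retrain_for_similar = needs_retraining(hot_dry_training, similar_live)
```

A check like this can feed an alert or kick off an automated retraining job when the flag is raised.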

From Data Points to Podium Points: The Real-World Impact

This immense technological framework isn’t just an academic exercise. It translates directly into on-track performance and strategic advantages that can be the difference between first and second place.

Simulation-Driven Car Design

Long before the cars hit the track, the team runs millions of simulations on Azure HPC (High-Performance Computing) clusters. They use computational fluid dynamics (CFD) and other models to test different car setups and aerodynamic packages. This data-driven approach, a key part of their Formula 1 cloud strategy, drastically reduces the need for costly physical testing and accelerates the car’s development cycle.

Precision Pit Stop Strategy

The decision of when to pit is one of the most critical in any F1 race. The AI models provide the strategists on the pit wall with precise probabilities. They can see the predicted outcome of pitting on lap 25 versus lap 26, factoring in traffic, tire performance drop-off, and the actions of rivals. This allows them to move beyond gut feelings and make decisions backed by millions of data points.
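The lap 25 versus lap 26 comparison can be sketched with a deliberately simple deterministic model: each lap costs a base time plus a penalty that grows with tire age, and a stop adds a fixed time loss. Real strategy models are probabilistic and account for traffic and rivals; every number and function name below is an assumption chosen for illustration.

```python
from typing import Iterable


def stint_time(laps: int, base: float, deg: float) -> float:
    """Total time for a stint where each lap slows by `deg` seconds per lap of tire age."""
    return sum(base + deg * age for age in range(1, laps + 1))


def race_time(pit_lap: int, total_laps: int, base: float, deg: float, pit_loss: float) -> float:
    """Total race time for a one-stop strategy pitting at the end of `pit_lap`."""
    first_stint = stint_time(pit_lap, base, deg)
    second_stint = stint_time(total_laps - pit_lap, base, deg)
    return first_stint + pit_loss + second_stint


def best_pit_lap(candidates: Iterable[int], **params: float) -> int:
    """Pick the candidate lap with the lowest predicted total race time."""
    return min(candidates, key=lambda lap: race_time(lap, **params))


# Illustrative parameters: 52 laps, 90 s base lap, 0.08 s/lap degradation, 22 s pit loss.
params = {"total_laps": 52, "base": 90.0, "deg": 0.08, "pit_loss": 22.0}
time_lap_25 = race_time(25, **params)
time_lap_26 = race_time(26, **params)
best = best_pit_lap([25, 26], **params)
```

With these numbers the two options differ by well under a tenth of a second, which shows why strategists want a model rather than intuition for such close calls.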

Proactive Reliability and Anomaly Detection

An unexpected component failure means a DNF (Did Not Finish) and zero points. Machine learning models continuously scan the sensor data for subtle anomalies that might precede a failure. A tiny, unusual vibration pattern or a slight increase in a specific component’s temperature could be an early warning sign, allowing the team to warn the driver or adjust their strategy to manage the issue and finish the race.
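A common starting point for this kind of early warning is a rolling z-score: each new sample is compared with the mean and spread of the samples just before it. The sketch below applies that to a synthetic vibration signal. The `find_anomalies` function, the window of 10 samples, and the threshold of 4.0 are hypothetical, and real systems would likely use learned models rather than a fixed rule.

```python
from statistics import mean, stdev
from typing import List, Sequence


def find_anomalies(signal: Sequence[float], window: int = 10, z_threshold: float = 4.0) -> List[int]:
    """Return indices whose value deviates strongly from the preceding window."""
    anomalies: List[int] = []
    for i in range(window, len(signal)):
        history = signal[i - window:i]
        spread = stdev(history)
        if spread == 0:
            continue  # a flat window gives no scale to compare against
        z = (signal[i] - mean(history)) / spread
        if abs(z) > z_threshold:
            anomalies.append(i)
    return anomalies


# Synthetic vibration amplitude: steady oscillation with one spike at index 20.
vibration = [1.0, 1.1] * 10 + [2.0] + [1.0, 1.1] * 3
anomalous_indices = find_anomalies(vibration)
```

Flagged indices can then be mapped back to timestamps and components so an engineer can decide whether to warn the driver or adjust the strategy.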

Frequently Asked Questions

What is the role of edge computing for the Mercedes-AMG Petronas F1 team?

Edge computing allows the team to perform initial, time-critical data processing right at the racetrack. For decisions that need to be made in fractions of a second, like alerting an engineer to a sudden tire pressure loss, sending data to the cloud and back is too slow. The trackside “garage data center” provides this instant analysis, ensuring the pit crew has the most immediate information available.

Why is MLOps so important in a high-stakes environment like Formula 1?

MLOps (Machine Learning Operations) is critical because it provides the framework to reliably build, deploy, and manage machine learning models at scale. In F1, models must be highly accurate, deployable in minutes, and constantly monitored for performance degradation as race conditions change. A robust MLOps practice ensures that the AI powering their strategy is trustworthy, agile, and always performing optimally.

Does all data processing happen in the Azure cloud?

No, it’s a hybrid model. The most immediate, low-latency processing happens at the edge (trackside). The larger, more complex analysis, large-scale model training, and long-term data storage happen in the Microsoft Azure cloud. This hybrid approach gives the team the best of both worlds: instant feedback at the track and the near-infinite scale and power of the cloud for deeper analysis.

How does the Microsoft AI F1 partnership benefit Mercedes beyond race strategy?

The partnership extends into engineering and manufacturing processes. The same data analytics and AI principles are used to optimize the design and manufacturing of the car’s components at the factory. By analyzing production data, they can improve efficiency, reduce waste, and increase the reliability of every part that goes into the car, contributing to overall performance.

Beyond the Checkered Flag: A Blueprint for High-Performance Business

The Microsoft AI F1 partnership with the Mercedes-AMG Petronas team is a powerful demonstration of how a sophisticated cloud and DevOps architecture can deliver a decisive competitive advantage. The principles they employ—unifying edge and cloud, embracing Infrastructure as Code, and operationalizing machine learning with MLOps—are not confined to the racetrack. Any organization looking to harness the power of real-time data, whether in finance, e-commerce, or logistics, can learn from this high-octane example.

The ultimate goal is to create a seamless, intelligent, and resilient system that turns information into insight and insight into action. Whether you’re chasing lap time or market share, the foundation for success is a world-class technology strategy.

If you’re ready to build a high-performance data and software strategy to accelerate your business, the experts at KleverOwl can help. Explore our AI & Automation solutions to unlock predictive insights, or check out our Web Development services to build the platforms that will power your growth. Contact us today for a consultation on how to build a secure, scalable, and winning technology stack.