Deconstructing the AI Agent: More Than Just a Smart Chatbot

The term “AI” has become synonymous with chatbots like ChatGPT, which excel at responding to prompts with human-like text. However, the next evolution is already here, and it’s centered on AI agents. These are not just passive responders; they are proactive, goal-oriented systems designed to perceive their environment, reason through complex problems, and take actions to achieve specific objectives. The fundamental shift is from instruction-following to problem-solving.

Think of it this way: you can ask a large language model (LLM) to write an email, and it will. An AI agent, however, can be given a higher-level goal like, “Organize a team meeting for next week to discuss the Q3 project launch.” The agent would then:

- Perceive: Access team members’ calendars via an API.

- Reason: Identify conflicting schedules and find a suitable time slot that works for everyone.

- Act: Draft the meeting invitation, book a conference room (or generate a video call link), and send the invites.
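The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production agent: the `FAKE_CALENDARS` data, the `Invite` type, and the `meet.example` link are all hypothetical stand-ins for a real calendar and mail API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set

# Hypothetical stand-in for a calendar API: busy hours (24h clock) per person.
FAKE_CALENDARS: Dict[str, Set[int]] = {
    "ana": {9, 10, 14},
    "ben": {9, 11, 15},
    "chen": {10, 11, 13},
}

@dataclass
class Invite:
    hour: int
    attendees: List[str]
    link: str

def perceive(team: List[str]) -> Dict[str, Set[int]]:
    """Perceive: fetch each member's busy hours (here, from the fake calendar)."""
    return {name: FAKE_CALENDARS[name] for name in team}

def reason(busy: Dict[str, Set[int]], workday: range = range(9, 17)) -> Optional[int]:
    """Reason: return the earliest hour at which nobody is busy, or None."""
    for hour in workday:
        if all(hour not in hours for hours in busy.values()):
            return hour
    return None

def act(hour: int, team: List[str]) -> Invite:
    """Act: build the invitation (a real agent would call a mail or calendar API)."""
    return Invite(hour=hour, attendees=team, link=f"https://meet.example/{hour}")

def schedule_meeting(team: List[str]) -> Optional[Invite]:
    busy = perceive(team)
    hour = reason(busy)
    if hour is None:
        return None  # no common slot; a real agent might widen the search
    return act(hour, team)

invite = schedule_meeting(["ana", "ben", "chen"])
print(invite)
```

With the sample calendars, hours 9 through 11 are each blocked for someone, so the sketch settles on 12:00.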

This capacity for autonomous action is what separates an agent from a tool. An agent has a degree of autonomy, a set of tools (such as API access or web browsing), and a memory to keep track of its progress. This distinction explains why coordinating agents, a practice known as AI agent orchestration (or LLM orchestration), is becoming an important discipline in modern software development.

The Orchestration Imperative: Why Single Agents Aren’t Enough

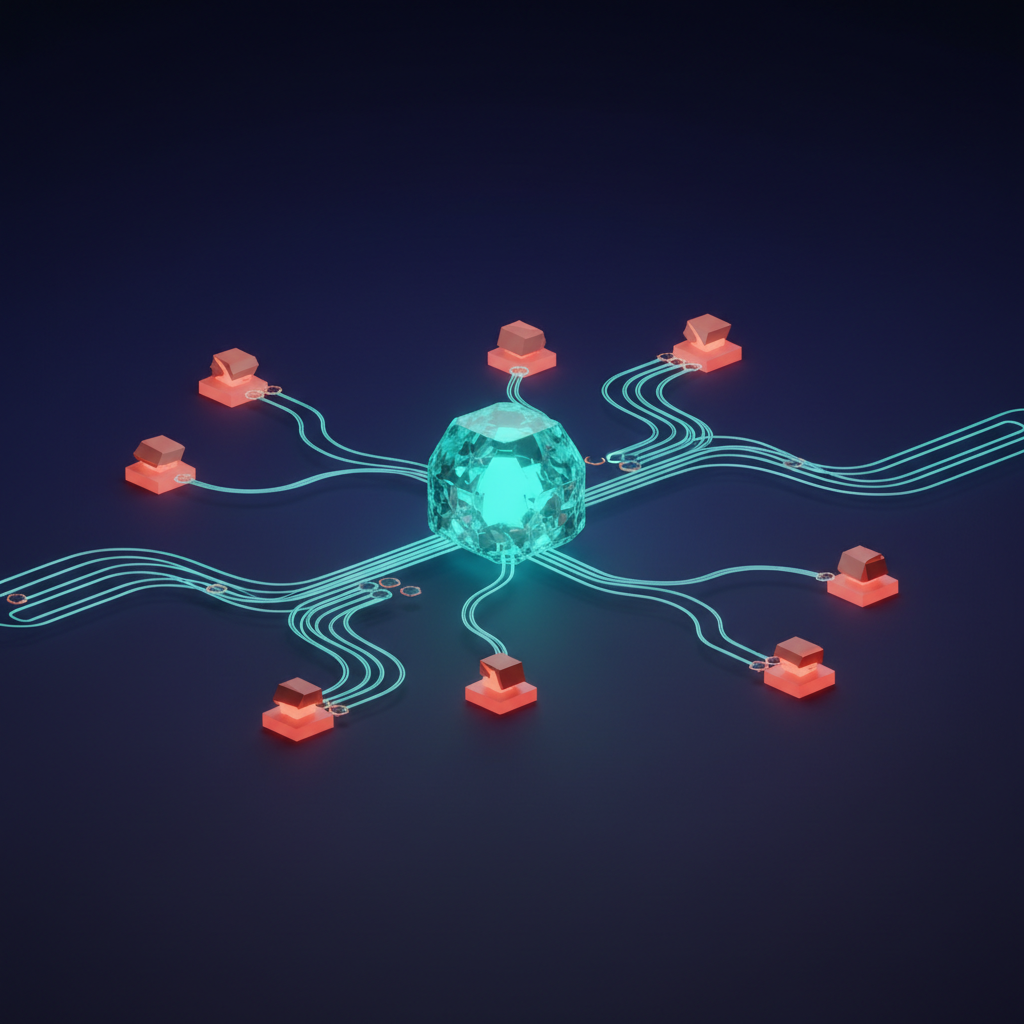

While a single, powerful AI agent can accomplish impressive tasks, most meaningful business processes are too complex for one entity to handle alone. Real-world challenges require a combination of specialized skills, access to different data sources, and a sequence of coordinated actions. This is where the concept of AI agent orchestration becomes essential.

Orchestration is the art and science of coordinating multiple specialized AI agents, tools, and data flows to accomplish a single, overarching goal. Imagine building a house. You don’t hire one “construction worker” to do everything. You hire a general contractor—the orchestrator—who manages a team of specialists: plumbers, electricians, carpenters, and painters. Each specialist (an agent) is an expert in their domain. The contractor ensures they work in the correct sequence and that their combined efforts result in a finished home.

Key Functions of an Orchestration Layer

A well-designed orchestration system is responsible for several critical functions:

- Task Decomposition: A central “manager” agent breaks down a high-level goal (e.g., “Launch a new marketing campaign”) into smaller, actionable sub-tasks (“Write ad copy,” “Generate images,” “Analyze target audience,” “Schedule social media posts”).

- Agent/Tool Selection: The system intelligently routes each sub-task to the most suitable agent or tool. A task requiring creative writing goes to an LLM fine-tuned for marketing copy, while a data analysis task might trigger a Python script agent.

- State Management: It maintains a persistent memory of the workflow’s progress. It knows which tasks are complete, which have failed, and what the results were, ensuring context is not lost between steps.

- Error Handling and Recovery: If one agent fails (e.g., an API call times out), the orchestrator can retry the task, delegate it to a different agent, or alert a human for intervention, preventing the entire workflow from collapsing.
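To make the four functions above concrete, here is a small sketch of an orchestration loop in plain Python. Everything in it is an assumption made for illustration: the worker functions stand in for LLM or tool calls, `decompose` returns a fixed plan where a manager agent would generate one, and `flaky_scheduler` deliberately fails once to simulate a timeout.

```python
from typing import Callable, Dict, List

# Hypothetical worker agents: plain functions standing in for LLM or tool calls.
def write_copy(goal: str) -> str:
    return f"Ad copy for: {goal}"

def analyze_audience(goal: str) -> str:
    return f"Audience report for: {goal}"

_calls = {"count": 0}

def flaky_scheduler(goal: str) -> str:
    """Fails on its first call to simulate an API timeout."""
    _calls["count"] += 1
    if _calls["count"] == 1:
        raise TimeoutError("scheduler API timed out")
    return f"Posts scheduled for: {goal}"

AGENTS: Dict[str, Callable[[str], str]] = {
    "copy": write_copy,
    "audience": analyze_audience,
    "schedule": flaky_scheduler,
}

def decompose(goal: str) -> List[str]:
    """Task decomposition: a fixed plan here; a manager LLM would produce this."""
    return ["audience", "copy", "schedule"]

def orchestrate(goal: str, max_retries: int = 2) -> Dict[str, dict]:
    state: Dict[str, dict] = {}  # state management: status and result per sub-task
    for task in decompose(goal):
        agent = AGENTS[task]  # agent selection: route by sub-task name
        for attempt in range(1, max_retries + 1):
            try:
                result = agent(goal)
                state[task] = {"status": "done", "result": result, "attempts": attempt}
                break
            except Exception as exc:  # error handling: record the failure and retry
                state[task] = {"status": "failed", "error": str(exc), "attempts": attempt}
        if state[task]["status"] == "failed":
            state[task]["status"] = "needs_human"  # escalate once retries run out
    return state

state = orchestrate("Launch a new marketing campaign")
for task, info in state.items():
    print(task, info["status"], info["attempts"])
```

The `state` dictionary is the part worth noticing: because every sub-task records its status and result, a later step (or a human) can see what happened without re-running the workflow.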

Architectural Blueprints for AI Agent Orchestration

Designing systems where multiple AI agents collaborate effectively requires thoughtful architecture. There isn’t a one-size-fits-all solution; the right model depends on the complexity and nature of the task. Two dominant architectural patterns have emerged: hierarchical and collaborative.

Hierarchical vs. Collaborative Models

The hierarchical model, often called a “manager-worker” or “master-agent” architecture, is the most common. In this setup, a primary agent acts as a planner or dispatcher. It analyzes the main objective, creates a step-by-step plan, and assigns each step to a subordinate “worker” agent. This structure is excellent for predictable, linear workflows where the sequence of operations is well-defined. For example, processing an insurance claim could involve a sequence of agents: one to extract data from a form, another to check it against a policy database, and a third to draft a response.
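The insurance-claim example can be sketched as a manager that runs three workers in a fixed order, passing a shared context between them. This is only an illustration of the pattern: the `POLICIES` table, the `key=value` form format, and the agent names are hypothetical, and each worker is an ordinary function rather than an LLM call.

```python
from typing import Callable, Dict, List

# Hypothetical policy database used only for this sketch.
POLICIES = {"P-100": {"holder": "Dana", "max_payout": 5000}}

def extract_agent(ctx: Dict) -> Dict:
    """Worker 1: pull structured fields out of the raw form text."""
    fields = dict(part.split("=") for part in ctx["form"].split(";"))
    ctx["policy_id"] = fields["policy"]
    ctx["amount"] = int(fields["amount"])
    return ctx

def policy_agent(ctx: Dict) -> Dict:
    """Worker 2: check the claim against the policy database."""
    policy = POLICIES.get(ctx["policy_id"])
    ctx["approved"] = policy is not None and ctx["amount"] <= policy["max_payout"]
    return ctx

def response_agent(ctx: Dict) -> Dict:
    """Worker 3: draft the reply to the customer."""
    verdict = "approved" if ctx["approved"] else "declined"
    ctx["response"] = f"Claim on {ctx['policy_id']} for {ctx['amount']} was {verdict}."
    return ctx

def manager(form: str) -> Dict:
    """Manager: owns the plan and hands the shared context to each worker in turn."""
    plan: List[Callable[[Dict], Dict]] = [extract_agent, policy_agent, response_agent]
    ctx: Dict = {"form": form}
    for worker in plan:
        ctx = worker(ctx)
    return ctx

result = manager("policy=P-100;amount=1200")
print(result["response"])
```

Because the plan is an explicit list, the sequence is easy to audit and test, which is a large part of why this pattern suits well-defined workflows.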

The collaborative model, by contrast, functions more like a roundtable discussion. A group of peer agents communicate with each other to debate, critique, and refine a solution. Frameworks like Microsoft’s AutoGen have popularized this approach, where agents play different roles (e.g., Engineer, Critic, Product Manager) and converse to solve a problem dynamically. This model shines in complex, open-ended tasks like software development or scientific research, where the optimal path forward is not known in advance.
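A propose-and-critique loop is one simple way to picture this pattern. The sketch below does not use AutoGen or any other framework's API; `engineer` and `critic` are hypothetical, deterministic functions standing in for LLM-backed agents so that the conversation flow is easy to follow.

```python
from typing import List, Tuple

def engineer(task: str, feedback: List[str]) -> str:
    """Proposes a solution, revising it to address every critique so far."""
    draft = f"solution for: {task}"
    if "add input validation" in feedback:
        draft += " [validates input]"
    if "add tests" in feedback:
        draft += " [has tests]"
    return draft

def critic(draft: str) -> str:
    """Returns one critique, or 'APPROVE' when nothing is left to fix."""
    if "[validates input]" not in draft:
        return "add input validation"
    if "[has tests]" not in draft:
        return "add tests"
    return "APPROVE"

def roundtable(task: str, max_rounds: int = 5) -> Tuple[str, List[Tuple[str, str]]]:
    """Alternate between the two agents until the critic approves or rounds run out."""
    transcript: List[Tuple[str, str]] = []
    feedback: List[str] = []
    draft = ""
    for _ in range(max_rounds):
        draft = engineer(task, feedback)
        transcript.append(("engineer", draft))
        verdict = critic(draft)
        transcript.append(("critic", verdict))
        if verdict == "APPROVE":
            break
        feedback.append(verdict)
    return draft, transcript

final_draft, transcript = roundtable("parse a CSV file")
for speaker, message in transcript:
    print(f"{speaker}: {message}")
```

Note the `max_rounds` cap: unlike a fixed pipeline, a conversation has no natural end, so some termination rule is needed to keep cost bounded.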

The Role of Frameworks like LangChain and AutoGen

Building these orchestration systems from scratch is a significant undertaking. Fortunately, open-source frameworks provide the essential “plumbing” to accelerate development.

- LangChain: This framework excels at “chaining” together LLM calls with various tools and data sources. It provides a rich library of components for creating agents that can interact with APIs, databases, and the file system. LangChain is particularly strong for building out the logic for individual agents and creating more structured, hierarchical workflows.

- AutoGen: This Microsoft Research project focuses specifically on simplifying the orchestration of multi-agent conversations. It provides a high-level abstraction for defining different agents with unique roles and system messages, allowing them to solve tasks collaboratively. Its strength lies in enabling dynamic, conversational problem-solving among agents.

The Next Frontier: The Agent Operating System

As we build increasingly complex systems with dozens or even hundreds of agents, a new layer of abstraction becomes necessary: the agent operating system (Agent OS). Just as a computer’s OS (like Windows or macOS) manages hardware resources (CPU, memory, storage) for various software applications, an Agent OS manages digital resources for a population of autonomous AI agents.

An Agent OS is not a product you can buy off the shelf today, but rather an architectural concept that pioneering teams are building towards. Its purpose is to provide a stable, secure, and efficient environment where agents can operate, communicate, and access tools without interfering with each other or causing systemic failures.

Core Components of an Agent OS

A mature agent operating system would likely include the following components:

- Resource Management: Centralized control over expensive resources like LLM API calls. It would handle API key management, enforce rate limits, and track costs to prevent runaway spending.

- Security and Sandboxing: The OS would provide a secure “sandbox” where an agent can execute code or browse the web without access to the host system’s sensitive files or internal network. This is vital for preventing malicious or buggy agents from causing damage.

- Inter-Agent Communication Bus: A standardized protocol for agents to send messages, share data, and delegate tasks to one another, much like applications use system-level APIs to communicate.

- Tool Registry: A managed library of verified and secure tools (APIs, functions, databases) that agents are permitted to use. This prevents agents from using unvetted or dangerous tools.
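Two of these components, the tool registry and resource management, are small enough to sketch together. The `AgentRuntime` class below is a hypothetical illustration rather than an existing product or library: tools must be registered and explicitly granted to an agent, and every call is charged against a shared budget (tracked in whole cents to avoid floating-point drift).

```python
from typing import Callable, Dict, Set

class BudgetExceeded(Exception):
    """Raised when a call would cost more than the remaining budget."""

class AgentRuntime:
    def __init__(self, budget_cents: int) -> None:
        self._tools: Dict[str, Callable[..., object]] = {}
        self._cost_cents: Dict[str, int] = {}
        self._grants: Dict[str, Set[str]] = {}
        self.remaining_cents = budget_cents

    def register_tool(self, name: str, fn: Callable[..., object], cost_cents: int = 0) -> None:
        """Tool registry: only registered tools can ever be called."""
        self._tools[name] = fn
        self._cost_cents[name] = cost_cents

    def grant(self, agent_id: str, tool_name: str) -> None:
        """Allow one agent to use one registered tool."""
        if tool_name not in self._tools:
            raise KeyError(f"unknown tool: {tool_name}")
        self._grants.setdefault(agent_id, set()).add(tool_name)

    def call(self, agent_id: str, tool_name: str, *args: object) -> object:
        """Check permission and budget before running the tool."""
        if tool_name not in self._grants.get(agent_id, set()):
            raise PermissionError(f"{agent_id} may not use {tool_name}")
        cost = self._cost_cents[tool_name]
        if cost > self.remaining_cents:
            raise BudgetExceeded(f"{tool_name} costs {cost}, only {self.remaining_cents} left")
        self.remaining_cents -= cost
        return self._tools[tool_name](*args)

runtime = AgentRuntime(budget_cents=5)
runtime.register_tool("shout", str.upper, cost_cents=2)
runtime.grant("writer", "shout")
print(runtime.call("writer", "shout", "hello"), runtime.remaining_cents)
```

Routing every tool call through a single `call` method is the design choice that matters here: it gives the system one place to enforce permissions, meter spending, and later add logging or sandboxing.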

The development of a robust agent operating system is a key step toward making large-scale, autonomous AI systems practical and safe for enterprise use. It’s a complex challenge that combines principles of distributed systems, cybersecurity, and thoughtful UI/UX design for human oversight.

Pursuing True Autonomy: The Goals and Guardrails

The ultimate goal of this work is to create truly autonomous AI systems that can independently manage complex, long-running tasks with minimal human guidance. The potential benefits are enormous, from fully automated software development pipelines to self-managing supply chains. However, the path to achieving this is fraught with significant technical and ethical challenges that require careful consideration.

The Problem of Reliability and Hallucination

LLMs are probabilistic, not deterministic: the same prompt can produce different outputs, and a model can “hallucinate” factually incorrect information. While this is a minor annoyance in a chatbot, it can be a catastrophic failure in an autonomous agent tasked with executing a stock trade or deploying code to production. Ensuring agent reliability requires extensive validation, fact-checking mechanisms, and systems that can recognize when their confidence is low and ask for human confirmation.
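One rough way to approximate “confidence” is to sample the model several times and measure how often the answers agree. The sketch below assumes that approach; it is not the only option, and agreement is only a proxy for correctness. The sample lists are hard-coded stand-ins for repeated LLM calls, and the 0.8 threshold is an arbitrary illustrative value.

```python
from collections import Counter
from typing import List, Tuple

def answer_with_confidence(samples: List[str]) -> Tuple[str, float]:
    """Return the most common answer and the share of samples that agree with it."""
    answer, votes = Counter(samples).most_common(1)[0]
    return answer, votes / len(samples)

def decide(samples: List[str], threshold: float = 0.8) -> str:
    """Execute only when agreement meets the threshold; otherwise escalate."""
    answer, confidence = answer_with_confidence(samples)
    if confidence >= threshold:
        return f"EXECUTE: {answer}"
    return f"ASK_HUMAN: best guess '{answer}' at {confidence:.0%} agreement"

# Hard-coded samples standing in for five calls to the same model.
print(decide(["sell 10"] * 5))
print(decide(["sell 10", "sell 10", "sell 100", "hold", "sell 10"]))
```

In the second case only three of five samples agree, so the sketch asks for confirmation instead of acting.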

Security and Control: The Alignment Problem

Giving an AI agent access to internal systems, APIs, and databases introduces substantial security risks. It also makes the “alignment problem” concrete: the challenge of ensuring that an AI’s goals match human values and intentions. How do we build guardrails to prevent an agent from misinterpreting a command and, for example, deleting the wrong database? This is where “human-in-the-loop” (HITL) design becomes critical. For high-stakes actions, the agent should be required to seek approval from a human operator before proceeding.
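A human-in-the-loop gate can be as simple as a check that sits between the agent’s decision and its execution. In this sketch the action names in `HIGH_STAKES` and the `approve` callback are hypothetical; in practice the callback might post to a chat channel or open a ticket and wait for a response.

```python
from typing import Callable

# Hypothetical list of actions that always need a human sign-off.
HIGH_STAKES = {"delete_database", "deploy_to_production"}

def run_action(action: str, target: str, approve: Callable[[str, str], bool]) -> str:
    """Run low-risk actions directly; require approval for high-stakes ones."""
    if action in HIGH_STAKES and not approve(action, target):
        return f"BLOCKED: {action} on {target} was not approved"
    # A real system would perform the action here.
    return f"DONE: {action} on {target}"

def cautious_operator(action: str, target: str) -> bool:
    """Stand-in for a human reviewer who refuses anything touching production."""
    return "prod" not in target

print(run_action("read_table", "prod-db", cautious_operator))
print(run_action("delete_database", "prod-db", cautious_operator))
print(run_action("delete_database", "staging-db", cautious_operator))
```

Keeping the high-stakes list outside the agent’s own reasoning means a misinterpreted command cannot talk its way past the gate.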

Cost and Latency

Sophisticated agentic workflows can be slow and expensive. A single high-level query might trigger dozens of underlying LLM calls, each adding to the final cost and response time. Optimizing these systems involves using smaller, faster models for simpler tasks, implementing intelligent caching strategies, and designing workflows that minimize unnecessary steps. Balancing performance with capability is a constant engineering trade-off.
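Two of these optimizations, model routing and caching, can be illustrated with the standard library alone. The sketch below makes several assumptions: the `small` and `large` model names are placeholders, word count is a deliberately crude proxy for task difficulty, and the `CALLS` counter stands in for billable API requests.

```python
from functools import lru_cache

# Counts simulated API calls per model tier so the savings are visible.
CALLS = {"small": 0, "large": 0}

def pick_model(prompt: str) -> str:
    """Route short prompts to the small model; word count is a crude proxy."""
    return "small" if len(prompt.split()) <= 12 else "large"

@lru_cache(maxsize=256)
def cached_completion(prompt: str) -> str:
    """Return a cached response for repeated prompts; otherwise 'call' a model."""
    model = pick_model(prompt)
    CALLS[model] += 1  # stand-in for a real, billable API request
    return f"[{model}] response to: {prompt}"

cached_completion("Summarize this ticket")
cached_completion("Summarize this ticket")  # identical prompt: served from cache
print(CALLS, cached_completion.cache_info().hits)
```

Exact-match caching like this only helps when prompts repeat verbatim, and it trades freshness for speed, so it suits stable lookups better than questions whose answers change over time.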

Frequently Asked Questions about AI Agent Orchestration

1. What’s the difference between AI orchestration and traditional workflow automation?

Traditional workflow automation (like Zapier or IFTTT) is based on rigid, predefined rules: “IF this happens, THEN do that.” AI orchestration is dynamic and intelligent. An orchestration system can understand a high-level goal, create a novel plan to achieve it, and adapt its plan if it encounters unexpected obstacles. It’s the difference between a flowchart and a project manager.

2. Can I build AI agents for my own business?

Absolutely. Using frameworks like LangChain and CrewAI, development teams can create custom agents tailored to their specific business processes. However, it requires a strong understanding of LLMs, API integration, and system architecture. For businesses looking to implement these solutions, partnering with a specialist firm can provide the necessary expertise to build robust and scalable AI and automation solutions.

3. What are some real-world examples of AI agents today?

We’re seeing them emerge in several fields. Cognition AI’s “Devin” is an example of an autonomous software engineering agent. Customer service platforms are using agents to not just answer questions but to access user accounts, process returns, and update orders. Internally, companies are building agents to automate financial reporting, conduct market research by browsing the web, and summarize long documents.

4. Is an “agent operating system” a real product I can use now?

The Agent OS is still an emerging concept rather than a standardized product category like Windows or Linux. Companies like CrewAI and SuperAGI are building platforms that incorporate many Agent OS principles, such as tool management and multi-agent collaboration. For now, it’s best viewed as a forward-looking design pattern that guides the development of sophisticated multi-agent platforms.

From Instruction-Takers to Problem-Solvers: What’s Next?

The evolution from single-prompt language models to collaborative systems of AI agents marks a profound shift in how we build software and automate work. We are moving beyond creating tools that simply follow instructions and are beginning to build partners that can independently solve problems. The core technologies driving this change—intelligent LLM orchestration, robust architectural patterns, and the foundational concepts of an agent operating system—are no longer theoretical.

The journey toward truly autonomous AI will be gradual and filled with challenges related to safety, reliability, and cost. However, the potential to augment human capability and solve previously intractable problems is undeniable. By focusing on building modular, secure, and human-supervised agentic systems, we can begin to harness this power today.

Ready to explore how custom AI agents can streamline your operations and unlock new efficiencies for your business? The team at KleverOwl specializes in designing and building bespoke AI and automation solutions that deliver tangible results. Contact us today to start the conversation.