Beyond the IDE: What “Robust Developer Tooling” Really Means

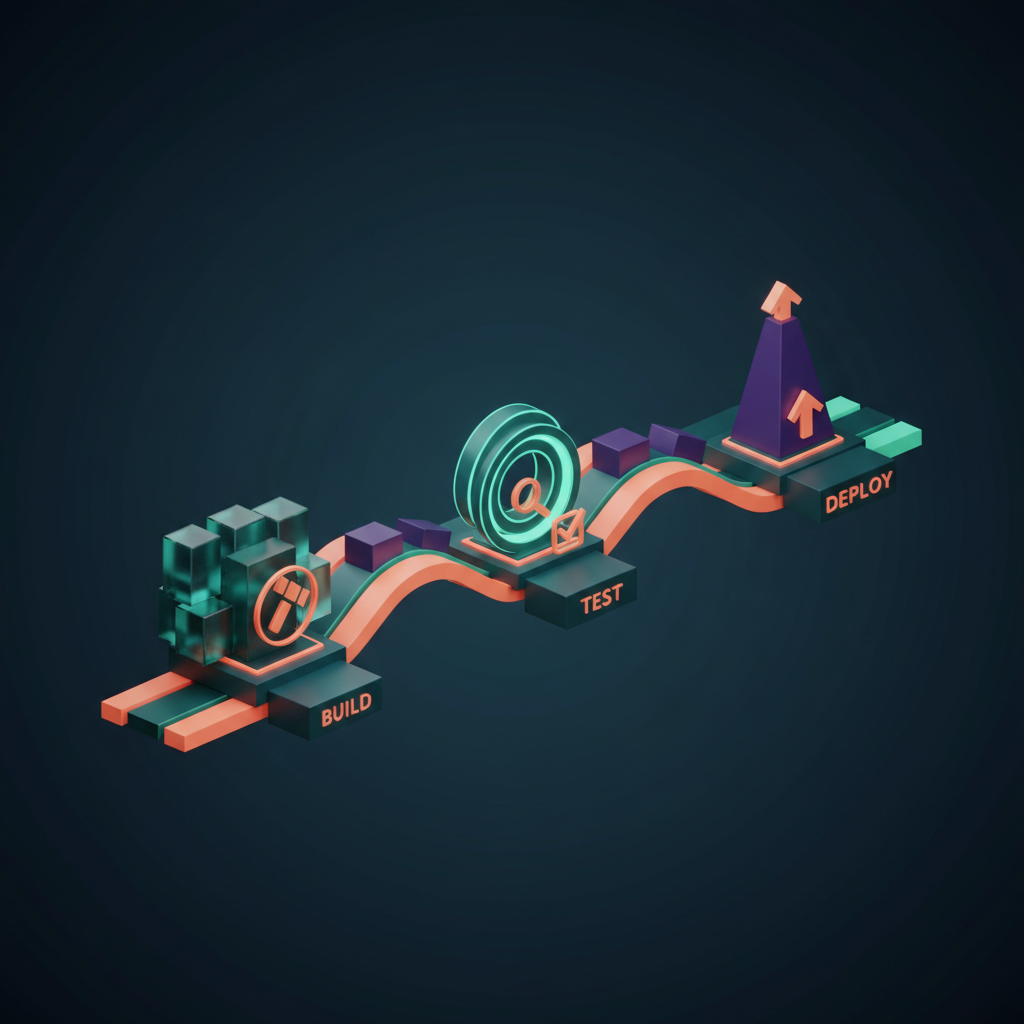

In modern software engineering, the quality of your code is only part of the equation for success. The other, equally critical part is the ecosystem of tools that supports your development lifecycle. A skilled artisan is limited by subpar instruments, and a development team is no different. We often talk about the importance of effective **DevOps tools**, but what transforms a random collection of software into a truly robust toolchain? It’s an integrated system designed for speed, quality, and insight, automating the mundane and illuminating the complex. This system is the silent partner in every successful project, enabling teams to build, test, and deploy with confidence and precision. It’s the difference between navigating with a compass and navigating with GPS—both get you there, but one does it with far greater efficiency and foresight.

What Constitutes a “Robust” Tooling Ecosystem?

A robust developer toolchain is more than just a subscription to the latest SaaS products. It’s a thoughtfully constructed, interconnected system that enhances every stage of the software development lifecycle (SDLC). The core principles of a robust system are integration, automation, and the creation of fast feedback loops.

Integration Over Isolation

Isolated tools create friction. A robust setup ensures that information flows seamlessly between different parts of the lifecycle. For example, your version control system should automatically trigger your CI/CD pipeline. The pipeline, in turn, should report build statuses back to your project management tool and communication platform (like Slack or Teams). When a deployment fails, the right people should be notified instantly with relevant logs from your monitoring system. This interconnectedness eliminates manual hand-offs and reduces the chance of human error.
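As a minimal sketch of this kind of hand-off, the Python below turns a build result into a chat notification payload. The `BuildResult` fields and the `format_build_notification` helper are hypothetical names chosen for illustration, not any CI vendor's API. A real pipeline would post the payload to its chat tool's incoming webhook.

```python
from dataclasses import dataclass


@dataclass
class BuildResult:
    """Hypothetical summary of one pipeline run (field names are assumptions)."""
    repo: str
    branch: str
    commit_sha: str
    passed: bool
    log_url: str


def format_build_notification(result: BuildResult) -> dict:
    """Build a chat-message payload so nobody has to relay build status by hand."""
    status = "passed" if result.passed else "FAILED"
    text = (
        f"Build {status} for {result.repo}@{result.branch} "
        f"({result.commit_sha[:7]})"
    )
    if not result.passed:
        # Failures carry a link to the logs so the right people can act at once.
        text += f" | logs: {result.log_url}"
    return {"text": text}
```

The point of the sketch is the shape of the integration: the pipeline produces structured data, and a small adapter translates it for the next tool in the chain.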

Automation as a Default

Every repetitive, predictable task is a candidate for automation. This goes far beyond just compiling code. A strong toolchain automates:

- Testing: Unit, integration, and end-to-end tests run automatically on every commit.

- Code Analysis: Static analysis tools scan for bugs, vulnerabilities, and style violations without a developer needing to initiate it.

- Deployment: Pushing code to staging or production environments is a standardized, automated process, not a manual checklist.

- Infrastructure Provisioning: Tools like Terraform or Ansible allow you to define your infrastructure as code, making it repeatable and version-controlled.

Automation frees up developers to focus on what they do best: solving complex problems and creating value.
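To make the idea concrete, here is a small sketch of a script that runs a list of checks in order and reports which ones passed. The `run_checks` and `all_passed` helpers are hypothetical, and the commands you pass in are whatever your project already uses. In practice a CI service does this for you; the sketch only shows the principle.

```python
import subprocess
import sys
from typing import Sequence


def run_checks(checks: Sequence[tuple[str, list[str]]]) -> list[tuple[str, bool]]:
    """Run each named command in order and record whether it exited with code 0."""
    results = []
    for name, command in checks:
        completed = subprocess.run(command, capture_output=True, text=True)
        results.append((name, completed.returncode == 0))
    return results


def all_passed(results: list[tuple[str, bool]]) -> bool:
    """True only if every check succeeded."""
    return all(ok for _, ok in results)


if __name__ == "__main__":
    # Placeholder command: replace with your project's real test or lint commands.
    outcome = run_checks([("tests", [sys.executable, "-m", "pytest"])])
    sys.exit(0 if all_passed(outcome) else 1)
```

Exiting with a non-zero code on failure is what lets a pipeline stop automatically instead of relying on someone reading the output.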

Fast, Actionable Feedback

The primary goal of this integrated, automated system is to provide rapid feedback. A developer should know within minutes—not hours or days—if their change broke the build, introduced a performance regression, or violated a security policy. The faster the feedback, the smaller the context switch and the cheaper the fix. This applies to performance as well; feedback from production monitoring should inform future development priorities.

The Foundation: Version Control and CI/CD Pipelines

At the very heart of any modern software project lies its version control system (VCS) and its Continuous Integration/Continuous Deployment (CI/CD) pipeline. These are the non-negotiable foundations upon which all other tooling is built.

Version Control: The Single Source of Truth

Git has become the de facto standard for version control, and for good reason. It provides a distributed, reliable history of every change made to the codebase. Platforms like GitHub, GitLab, and Bitbucket build upon Git by adding powerful features for collaboration, such as pull/merge requests, code reviews, and issue tracking. Your Git repository is the single source of truth for your application. Every deployment, every feature, and every bug fix starts here. A disciplined branching strategy (like GitFlow or a simpler trunk-based model) is the procedural glue that makes collaborative development on this foundation possible.

CI/CD: The Automated Assembly Line

If Git is the source of truth, the CI/CD pipeline is the automated factory that turns that truth into a running application. This is the core engine of the **DevOps tools** ecosystem.

- Continuous Integration (CI): The practice of frequently merging all developer working copies to a shared mainline. Each merge triggers an automated build and test sequence. The goal is to detect integration bugs early and often. Tools like Jenkins, GitLab CI, CircleCI, and GitHub Actions are leaders in this space.

- Continuous Deployment/Delivery (CD): This is the logical extension of CI. Continuous Delivery ensures that every change that passes the automated tests can be released to production with the click of a button. Continuous Deployment takes it a step further, automatically deploying every passed build to production without human intervention.

A well-configured pipeline provides the safety net that allows teams to move quickly without breaking things. It standardizes the path to production, making deployments predictable and stress-free.
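The difference between Continuous Delivery and Continuous Deployment comes down to one decision at the end of the pipeline. The sketch below models that decision. `ReleaseMode` and `next_action` are hypothetical names used for illustration only.

```python
from enum import Enum


class ReleaseMode(Enum):
    CONTINUOUS_DELIVERY = "delivery"      # a human approves the final step
    CONTINUOUS_DEPLOYMENT = "deployment"  # no human in the loop


def next_action(tests_passed: bool, mode: ReleaseMode, approved: bool = False) -> str:
    """Decide what the pipeline does after the automated test stage."""
    if not tests_passed:
        return "stop"  # a failed build never reaches production in either mode
    if mode is ReleaseMode.CONTINUOUS_DEPLOYMENT:
        return "deploy"
    # Continuous Delivery: the build is releasable, but waits for a click.
    return "deploy" if approved else "await_approval"
```

In both modes the automated tests are the gate. The only thing that changes is whether a person confirms the final release.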

Ensuring Quality: The Power of Code Compliance Tools

Writing functional code is just the first step. Writing clean, maintainable, and secure code is what ensures a project’s long-term health. This is where **code compliance** tools become indispensable. They act as an automated peer reviewer, enforcing standards and catching issues before they ever reach a human reviewer.

Static Application Security Testing (SAST)

SAST tools analyze source code to find security vulnerabilities without actually running the application. They can detect common issues like SQL injection, cross-site scripting (XSS), and insecure library usage. Integrating a SAST tool (like SonarQube, Snyk, or Checkmarx) directly into your CI pipeline means that security becomes a proactive part of the development process, not an afterthought.

Linters and Formatters

Consistency is key to readability and maintainability. Linters (like ESLint for JavaScript or Pylint for Python) analyze code for programming and stylistic errors. Formatters (like Prettier or Black) automatically reformat code to conform to a predefined style guide. By automating these checks, you eliminate entire categories of tedious code review comments and ensure the entire codebase has a uniform look and feel, making it easier for any developer to understand.
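The distinction is easy to see in a toy example: a linter reports problems, while a formatter rewrites the code. The sketch below is only an illustration and does not reflect how ESLint, Pylint, Prettier, or Black are implemented. The `lint` and `format_source` helpers are hypothetical, and the 88-character limit is an assumed default.

```python
def lint(source: str, max_line_length: int = 88) -> list[str]:
    """Report problems without changing the code (what a linter does)."""
    problems = []
    for number, line in enumerate(source.splitlines(), start=1):
        if line != line.rstrip():
            problems.append(f"line {number}: trailing whitespace")
        if len(line) > max_line_length:
            problems.append(f"line {number}: longer than {max_line_length} characters")
    return problems


def format_source(source: str) -> str:
    """Rewrite the code to match the style (what a formatter does)."""
    lines = [line.rstrip() for line in source.splitlines()]
    # Strip trailing whitespace and end the file with exactly one newline.
    return "\n".join(lines).rstrip("\n") + "\n"
```

Running the formatter first and the linter second is a common pairing: the formatter removes the mechanical issues, so the linter's output stays focused on things a human needs to decide.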

Code Quality Metrics

Tools like SonarQube go beyond simple style checks. They provide deeper metrics on code quality, such as cyclomatic complexity (roughly, the number of independent paths through a function, which grows with every branch and loop), code duplication, and test coverage. Setting quality gates in your CI pipeline (for example, failing a build if test coverage drops below 80%) enforces a consistent minimum standard across the entire team.

Beyond the Code: SQL Monitoring and Database Performance

An application is often only as fast as its database. A perfectly optimized frontend can be brought to its knees by a single inefficient database query. This makes **SQL monitoring** a critical, yet often overlooked, component of a robust tooling setup. You cannot optimize what you cannot measure, and databases can be complex black boxes without the right tools.

Identifying Performance Bottlenecks

SQL monitoring tools provide deep visibility into the performance of your database. They help you answer critical questions:

- Which queries are taking the longest to execute?

- Are our indexes being used effectively, or are we performing slow table scans?

- Are we experiencing deadlocks or high lock contention?

- Is our connection pool configured correctly, or is it a source of latency?
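The index question in particular is easy to demonstrate. The sketch below uses SQLite (via Python's standard `sqlite3` module) and its `EXPLAIN QUERY PLAN` statement to compare the plan for the same query before and after adding an index. The `users` table, the `idx_users_email` index, and the `query_plan` helper are made up for this example, and other databases expose the same idea through their own `EXPLAIN` variants.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> list[str]:
    """Return the human-readable steps SQLite plans to use for a query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # Each row is (id, parent, notused, detail); the last column is the description.
    return [row[3] for row in rows]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, name TEXT)")
lookup = "SELECT name FROM users WHERE email = ?"

# Without an index, SQLite has to scan the whole table.
plan_without_index = query_plan(conn, lookup, ("a@example.com",))

# With an index on the filtered column, it can search the index instead.
conn.execute("CREATE INDEX idx_users_email ON users (email)")
plan_with_index = query_plan(conn, lookup, ("a@example.com",))

print(plan_without_index)
print(plan_with_index)
```

The first plan describes a scan of `users`, while the second names `idx_users_email`. The exact wording of the plan text varies between SQLite versions.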

Key Tools and Metrics

Application Performance Monitoring (APM) solutions like Datadog, New Relic, and Dynatrace offer powerful SQL monitoring modules. They can trace a slow API request all the way down to the specific query that’s causing the delay. For more focused database monitoring, tools like pganalyze (for PostgreSQL) or SolarWinds Database Performance Analyzer provide even more granular detail. They present information through intuitive dashboards, showing query execution plans, index recommendations, and historical performance trends, allowing developers and DBAs to pinpoint and resolve issues proactively.
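At a much smaller scale, the core idea behind these tools (measure every query, then rank them) can be sketched in a few lines. The `QueryTimer` class below is a hypothetical wrapper around a `sqlite3` connection. It is not how any of the products above work internally and is meant only to show what "which queries are slowest?" means in code.

```python
import sqlite3
import time
from collections import defaultdict


class QueryTimer:
    """Wraps a connection and records how long each SQL statement takes."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.timings = defaultdict(list)  # SQL text -> list of durations in seconds

    def execute(self, sql: str, params: tuple = ()) -> list:
        start = time.perf_counter()
        try:
            return self.conn.execute(sql, params).fetchall()
        finally:
            # Record the duration even if the query raised an error.
            self.timings[sql].append(time.perf_counter() - start)

    def slowest(self, limit: int = 5) -> list[tuple[str, float, int]]:
        """Return (sql, total_seconds, call_count), slowest total first."""
        totals = [(sql, sum(d), len(d)) for sql, d in self.timings.items()]
        return sorted(totals, key=lambda item: item[1], reverse=True)[:limit]
```

Grouping by the parameterized SQL text, rather than by the final query with values filled in, is what lets repeated calls to the same statement add up in the report.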

The Art of Troubleshooting: Advanced Debugging Tools

When bugs inevitably occur, especially in complex production environments, basic `console.log` statements are not enough. A mature toolchain includes a suite of advanced **debugging tools** that allow developers to quickly diagnose and resolve even the most elusive issues.

Distributed Tracing

In a microservices architecture, a single user request might travel through dozens of services. When something goes wrong, how do you know where the failure occurred? Distributed tracing tools (like Jaeger or Zipkin) solve this by assigning a unique ID to each request and propagating it across service boundaries. This allows you to visualize the entire lifecycle of a request, see the latency at each step, and immediately identify the service that is causing an error or delay.
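The propagation step is the heart of the technique, and it can be sketched without any tracing library. In the example below, two functions stand in for two services, and a plain dictionary stands in for HTTP headers. The `x-trace-id` header name and the function names are assumptions made for this sketch. Real systems typically rely on a standard such as the W3C Trace Context `traceparent` header and let an instrumentation library handle it.

```python
import uuid

TRACE_HEADER = "x-trace-id"  # hypothetical header name for this sketch
spans = []  # stands in for the tracing backend that collects spans


def record_span(service: str, headers: dict) -> dict:
    """Reuse the incoming trace ID, or start a new trace at the edge."""
    trace_id = headers.get(TRACE_HEADER) or uuid.uuid4().hex
    spans.append({"trace_id": trace_id, "service": service})
    # Outgoing calls carry the same ID so downstream spans join the same trace.
    return {**headers, TRACE_HEADER: trace_id}


def charge_payment(headers: dict) -> None:
    """Downstream service: joins the trace it was handed."""
    record_span("payments", headers)


def handle_checkout(headers: dict) -> str:
    """Edge service: starts the trace and passes it along."""
    outgoing = record_span("checkout", headers)
    charge_payment(outgoing)
    return outgoing[TRACE_HEADER]
```

Because every span shares one trace ID, the backend can stitch the "checkout" and "payments" records into a single timeline for the request.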

Profilers and Memory Analyzers

Performance bugs can be harder to solve than functional bugs. A profiler is a tool that analyzes your application’s performance at a granular level, showing you which functions are consuming the most CPU time or memory. Memory analyzers help detect memory leaks, where an application fails to release memory it no longer needs, eventually leading to a crash. Tools like VisualVM for Java, Valgrind for C/C++, or the built-in profilers in Chrome DevTools for frontend applications are essential for performance tuning.
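For a quick taste of what these tools report, Python ships with a CPU profiler (`cProfile`) and a memory tracker (`tracemalloc`) in its standard library. The sketch below wraps each in a small helper. `build_squares`, `profile_cpu`, and `peak_memory_bytes` are illustrative names, and the same workflow applies to the language-specific tools mentioned above.

```python
import cProfile
import io
import pstats
import tracemalloc


def build_squares(n: int) -> list[int]:
    """Example workload: allocates a list of n integers."""
    return [i * i for i in range(n)]


def profile_cpu(func, *args) -> str:
    """Run func under cProfile and return the top entries by cumulative time."""
    profiler = cProfile.Profile()
    profiler.runcall(func, *args)
    buffer = io.StringIO()
    pstats.Stats(profiler, stream=buffer).sort_stats("cumulative").print_stats(5)
    return buffer.getvalue()


def peak_memory_bytes(func, *args) -> int:
    """Return the peak memory traced by tracemalloc while func runs."""
    tracemalloc.start()
    try:
        func(*args)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return peak


if __name__ == "__main__":
    print(profile_cpu(build_squares, 100_000))
    print(peak_memory_bytes(build_squares, 100_000), "bytes at peak")
```

The CPU report shows where time goes, and the memory figure shows how much was allocated at the high-water mark. A memory leak shows up as that peak growing from run to run when it should stay flat.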

Remote Debugging

Sometimes, a bug only appears in a specific environment (like staging or even production). Remote debugging allows you to connect your local IDE’s debugger to a running process on a remote server. This lets you set breakpoints, inspect variables, and step through code as if it were running on your own machine, providing an incredibly powerful way to diagnose environment-specific issues. Because a process paused at a breakpoint stops handling requests, use it with care in production, ideally against a single instance that is not serving live traffic.

Frequently Asked Questions (FAQ)

- What’s the difference between monitoring and observability?

  Monitoring is about collecting predefined sets of metrics or logs to watch for known failure modes (e.g., “Is the CPU usage above 90%?”). Observability, on the other hand, is about having a system that is so well-instrumented that you can ask arbitrary questions about its state to debug unknown problems. It’s built on three pillars: logs (events), metrics (measurements), and traces (request flows). Monitoring tells you *that* something is wrong; observability helps you figure out *why*.

- How do DevOps tools improve team collaboration?

  They create a shared, transparent platform. Code reviews happen in a centralized place (GitHub/GitLab). Build and deployment statuses are visible to everyone in a shared channel (Slack/Teams). Dashboards from monitoring tools provide a common view of application health. This shared context reduces misunderstandings and ensures everyone is working with the same information.

- Can small teams benefit from robust developer tooling?

  Absolutely. In fact, small teams may benefit even more. Automation allows a small team to achieve the output of a much larger one by handling repetitive tasks. A solid CI/CD pipeline and good monitoring tools provide a safety net that allows the team to move fast and experiment without a large, dedicated operations team.

- What are some common pitfalls when implementing a new developer tool?

  The biggest pitfall is choosing a tool without a clear problem to solve, leading to “tool sprawl.” Another is failing to get team buy-in and provide adequate training, causing the tool to be underutilized or used incorrectly. Finally, teams often underestimate the effort required to properly integrate a tool into their existing workflow, leaving it as an isolated, less effective component.

- How does code compliance impact long-term project maintainability?

  It has a massive impact. Enforcing a consistent style makes the code easier for new developers to read and understand. Static analysis catches subtle bugs and “code smells” that would otherwise accumulate over time, creating technical debt. Security scanning prevents vulnerabilities from becoming deeply embedded in the application. In short, it keeps the codebase clean and manageable as it grows.

Your Toolchain as a Strategic Asset

Ultimately, a robust set of developer and **DevOps tools** is not a cost center; it’s a strategic investment in quality, speed, and developer happiness. It empowers teams to focus on creating business value by automating away toil, providing clear insights into application health, and catching errors at the earliest possible stage. Building such an ecosystem requires expertise and a holistic view of the development lifecycle, from the first line of code to monitoring in production.

If you’re looking to elevate your development practices, build a resilient CI/CD pipeline, or gain deeper insights into your application’s performance, the experts at KleverOwl can help. We specialize in creating tailored solutions that empower your team to build better software, faster. For instance, we can help you leverage AI solutions and automation to streamline your workflows.

Ready to build your strategic advantage? Explore our web development services or contact us for a UI/UX design consultation to fortify your development lifecycle.