AlpamayoR1: Is Causal Reasoning the Missing Link for Truly Autonomous Driving?

Imagine an autonomous vehicle navigating a quiet suburban street. Suddenly, a bright red ball bounces from between two parked cars and rolls into the road. A standard AI might register the ball as a low-threat, non-sentient object and continue on its path, reacting only when a child darts out after it. That reaction, however fast, might come too late. This scenario highlights a fundamental gap in many current self-driving systems: the difference between seeing and understanding. The move toward causal reasoning in autonomous driving, embodied by conceptual models like AlpamayoR1, is about closing that gap. It’s about building systems that don’t just perceive the world but comprehend the cause-and-effect relationships that govern it, with the goal of safer, more predictable, and more human-like autonomous agents.

Beyond Pattern Matching: The Shift from Correlation to Causation

For years, the engine of progress in autonomous driving has been deep learning, a branch of machine learning that excels at identifying patterns in vast datasets. These models, often based on convolutional neural networks (CNNs), are masters of correlation. They can be trained on millions of miles of driving data to associate a red octagonal sign with the action of braking, or the shape of a person with the need to yield. This is incredibly powerful but has a critical limitation: correlation isn’t causation.

A correlational model doesn’t understand why it stops at a stop sign; it just knows that in the training data, this image is overwhelmingly associated with this action. This works in the vast majority of everyday driving. The danger lies in the remainder: the edge cases, the novel situations, the “long-tail” events that weren’t adequately represented in the training data.

The Problem with a Correlational Worldview

- Brittleness in Novel Situations: A correlational AI might be confused by a stop sign partially obscured by a tree branch, or one held by a construction worker in an unexpected location. It lacks the underlying concept of what a stop sign is and the authority it represents.

- Lack of Generalization: It struggles to apply learned knowledge to entirely new contexts. For example, understanding that a police officer directing traffic overrides a green light requires a deeper, rule-based comprehension of road hierarchy that goes beyond simple image-to-action mapping.

- The “Black Box” Dilemma: When a correlational model makes a mistake, it can be incredibly difficult to diagnose why. The decision-making process is buried in millions of weighted parameters, making it opaque. This lack of transparency is a major roadblock for regulatory approval and public trust.

The AlpamayoR1 Model Explained: A New Architecture for Understanding

AlpamayoR1 represents a conceptual leap forward by integrating a causal inference engine at its core. Instead of a single, monolithic neural network, its architecture is better understood as a multi-stage system designed for perception, reasoning, and action. This approach provides a clearer pathway to one of the most sought-after goals in the industry: explainable AI in autonomous vehicles.

Component 1: The Advanced Perception Layer

Like current systems, AlpamayoR1 begins with a sophisticated perception stack. It fuses data from LiDAR, radar, and cameras to build a high-fidelity, 3D model of its immediate environment. This is the foundation of its awareness. This layer is responsible for identifying and classifying objects: cars, pedestrians, cyclists, traffic lights, and road markings. This is the “what” of the driving scene.
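As a rough illustration of what “fusing” sensor data can mean, the sketch below combines three distance estimates for the same object using inverse-variance weighting, a common textbook technique. The `RangeReading` type, the `fuse_range` function, and all noise values are assumptions made for this example; the article does not specify how AlpamayoR1 would actually fuse its inputs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RangeReading:
    sensor: str        # "lidar", "radar", or "camera"
    distance_m: float  # estimated distance to the object, in meters
    variance: float    # assumed measurement noise variance, in m^2


def fuse_range(readings):
    """Inverse-variance weighted mean: less noisy sensors count for more."""
    weights = [1.0 / r.variance for r in readings]
    total_weight = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total_weight
    fused_variance = 1.0 / total_weight  # never larger than the best single sensor
    return fused, fused_variance


# Hypothetical readings for one pedestrian; the noise levels are invented.
readings = [
    RangeReading("lidar", 20.0, 0.01),
    RangeReading("radar", 20.4, 0.04),
    RangeReading("camera", 19.0, 1.0),
]
fused_distance, fused_variance = fuse_range(readings)
print(f"fused distance: {fused_distance:.2f} m, variance: {fused_variance:.4f} m^2")
```

The fused estimate stays close to the LiDAR reading because LiDAR is assumed to be the least noisy sensor here, while the camera’s rough estimate barely shifts the result.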

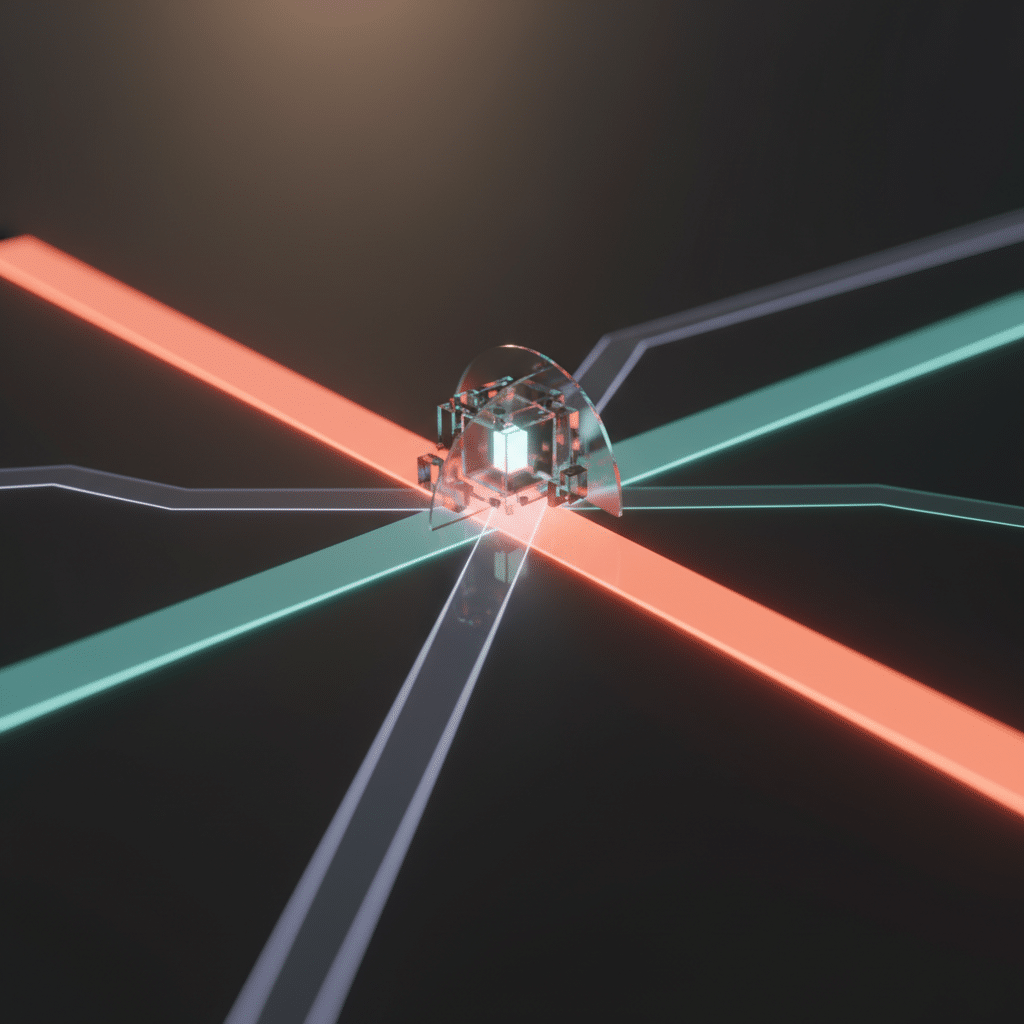

Component 2: The Causal Inference Engine

This is the model’s true innovation. The classified objects and their states (position, velocity, etc.) from the perception layer are fed into a Causal Inference Engine. This engine doesn’t just see a “ball” and a “pedestrian.” It operates on a pre-defined but dynamically updated structural causal model of the world—a sort of knowledge graph of how things interact.

- It understands that balls are often followed by children.

- It knows that brake lights on the car ahead cause it to decelerate.

- It infers that a person looking at their phone near a crosswalk has a lower awareness and is therefore a higher potential risk.
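To make the idea of a causal knowledge graph more concrete, here is a minimal sketch that stores cause-and-effect links as a dictionary and propagates a probability along a chain of them. The link names, the probabilities, and the `p_effect_chain` helper are all invented for illustration, and the sketch assumes each effect depends only on its immediate cause. A real structural causal model would be far richer.

```python
# Hypothetical causal links: (cause, effect) -> (P(effect | cause), P(effect | no cause)).
# Every number here is made up for the example.
CAUSAL_LINKS = {
    ("ball_in_road", "child_nearby"): (0.60, 0.05),
    ("child_nearby", "child_enters_road"): (0.50, 0.00),
}


def p_effect_chain(chain, p_first):
    """Propagate the probability of the first node along a chain of causal links."""
    p = p_first
    for cause, effect in zip(chain, chain[1:]):
        p_if_cause, p_if_no_cause = CAUSAL_LINKS[(cause, effect)]
        # Law of total probability over whether the cause is present.
        p = p * p_if_cause + (1.0 - p) * p_if_no_cause
    return p


chain = ["ball_in_road", "child_nearby", "child_enters_road"]
risk_with_ball = p_effect_chain(chain, p_first=1.0)     # ball observed
risk_without_ball = p_effect_chain(chain, p_first=0.0)  # no ball observed
print(f"with ball: {risk_with_ball:.3f}, without ball: {risk_without_ball:.3f}")
```

Under these made-up numbers, seeing the ball raises the estimated chance of a child entering the road from 2.5% to 30%, which is the kind of jump that would justify slowing down early.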

This engine allows the system to ask “what if?” questions in real time, a form of interventional and counterfactual reasoning. What if that car ahead brakes suddenly? It can also reason about intent: what is the cyclist who just glanced over their shoulder likely to do next? This is the “why” that has been missing.
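A “what if the car ahead brakes suddenly?” query can be sketched as a small forward simulation under a hypothetical intervention. The example below uses simple stopping-distance kinematics and compares where both cars come to rest, which is a deliberate simplification. The function names, deceleration values, and reaction time are assumptions for illustration, not parameters of any real system.

```python
def stopping_distance(speed_mps, decel_mps2, reaction_s=0.0):
    """Distance covered while reacting at constant speed, then braking to a stop."""
    return speed_mps * reaction_s + speed_mps ** 2 / (2.0 * decel_mps2)


def gap_after_hard_brake(gap_m, ego_speed, lead_speed,
                         ego_decel=6.0, lead_decel=8.0, reaction_s=0.5):
    """What-if query: the lead car brakes hard right now.

    Returns the remaining gap (meters) once both cars have stopped.
    A negative value means the simplified model predicts a collision.
    """
    ego_stop = stopping_distance(ego_speed, ego_decel, reaction_s)
    lead_stop = stopping_distance(lead_speed, lead_decel)
    return gap_m + lead_stop - ego_stop


# Both cars at 20 m/s (72 km/h); compare two following distances.
for gap in (15.0, 30.0):
    remaining = gap_after_hard_brake(gap, ego_speed=20.0, lead_speed=20.0)
    verdict = "collision predicted" if remaining < 0 else "safe"
    print(f"gap {gap:.0f} m -> remaining {remaining:.1f} m ({verdict})")
```

With these assumed values, a 15 m gap is not enough if the lead car brakes hard, while a 30 m gap leaves a margin of roughly 11.7 m.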

Component 3: The Proactive Decision-Making Module

The output of the Causal Inference Engine informs the final decision-making module. Because the system has a richer, cause-and-effect understanding of the scene, its decisions become proactive rather than reactive. In the bouncing ball scenario, an AlpamayoR1-powered vehicle wouldn’t just see a ball. It would infer the high probability of an impending child, classify the situation as high-risk, and begin to slow down preemptively, long before the child becomes a direct obstacle. Its action is not just a reaction to a sensor input, but a reasoned response to a predicted outcome.
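The proactive behavior described above can be sketched as a simple rule: if the inferred hazard probability crosses a threshold, cap the speed so the car could stop before reaching the point where the hazard might appear. The threshold, deceleration, reaction time, and function names below are hypothetical choices for illustration only.

```python
import math


def max_safe_speed(distance_m, decel_mps2=6.0, reaction_s=0.5):
    """Largest speed v that satisfies v*t + v^2 / (2a) <= distance."""
    at = decel_mps2 * reaction_s
    return -at + math.sqrt(at * at + 2.0 * decel_mps2 * distance_m)


def target_speed(current_speed, hazard_probability, distance_m, risk_threshold=0.1):
    """Slow down preemptively only when the inferred risk is high enough."""
    if hazard_probability < risk_threshold:
        return current_speed
    return min(current_speed, max_safe_speed(distance_m))


# Ball seen 20 m ahead while driving at 13.9 m/s (about 50 km/h).
# The 0.30 and 0.02 probabilities stand in for outputs of a causal engine.
print(round(target_speed(13.9, hazard_probability=0.30, distance_m=20.0), 2))
print(round(target_speed(13.9, hazard_probability=0.02, distance_m=20.0), 2))
```

In the high-risk case the target drops below the current 13.9 m/s, even though nothing is physically blocking the lane yet. In the low-risk case the speed is left unchanged.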

Enhancing Safety in the Unpredictable Real World

The primary benefit of a causal reasoning approach is better handling of ambiguity and unpredictability. Many of the most dangerous driving situations are the ones that are not straightforward. A causal model aims to provide the context-aware intelligence needed to navigate these scenarios safely.

Consider a vehicle approaching an intersection where a driver in another car is waving them on, despite the traffic light being red for the autonomous car. A purely correlational system faces a conflict: the visual data (waving driver) contradicts the rule-based data (red light). An AlpamayoR1-style model can reason about the situation. It understands the concept of human communication and intent, weighs it against the baseline traffic laws, and can make a more nuanced decision—perhaps inching forward cautiously to confirm the other driver’s intent while remaining prepared to stop.

From Black Box to Glass Box: The Importance of Explainability

One of the biggest hurdles for the widespread adoption of AI for self-driving cars is the inability of current systems to explain their decisions. If an autonomous vehicle is involved in an accident, investigators, insurers, and regulators need to know why it made the choices it did. A black box model offers no answers.

A causal reasoning framework is inherently more transparent. The AlpamayoR1 model can externalize its decision-making process in a human-readable format. An incident log might read:

- “Detected object classified as ‘erratic vehicle’ (Event A).”

- “Inferred high probability of ‘impaired driver’ based on causal link between erratic swerving and driver status (Inference B).”

- “Increased following distance and moved to adjacent lane to minimize collision risk from unpredictable behavior (Action C).”
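One way such a log could be produced is to record each step of the reasoning chain as a small structured record and render it to text afterward. The `TraceStep` type and `render_trace` function below are hypothetical names for this sketch, and the entries simply mirror the example log above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TraceStep:
    kind: str   # "Event", "Inference", or "Action"
    label: str  # short identifier, e.g. "A"
    text: str   # human-readable description of the step


def render_trace(steps):
    """Format each step as one log line: '<text> (<kind> <label>).'"""
    return [f"{step.text} ({step.kind} {step.label})." for step in steps]


trace = [
    TraceStep("Event", "A", "Detected object classified as 'erratic vehicle'"),
    TraceStep("Inference", "B",
              "Inferred high probability of 'impaired driver' based on causal "
              "link between erratic swerving and driver status"),
    TraceStep("Action", "C",
              "Increased following distance and moved to adjacent lane to "
              "minimize collision risk from unpredictable behavior"),
]

log_lines = render_trace(trace)
for line in log_lines:
    print(line)
```

Keeping the trace as structured data rather than free text means the same records could feed both a readable incident report and automated debugging tools.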

This level of explainability is not just useful for post-accident analysis; it’s essential for development, debugging, and building a foundation of trust with both the public and regulatory bodies.

Challenges on the Road to Causal AI

While the promise is immense, building and deploying models like AlpamayoR1 is not without significant challenges. This is not a technology that will be on the streets tomorrow.

The Data and Modeling Hurdle

Causal models require more than just observational data. Without strong assumptions, they also need interventional data to learn cause and effect reliably. This means understanding what happens when the system actively intervenes in the world. Safely collecting this kind of data is a monumental task. Furthermore, constructing a causal graph that is comprehensive enough to represent the near-infinite complexities of real-world driving is a huge research and engineering effort.
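A toy calculation shows why observational data alone can mislead. In the sketch below, wet roads make drivers brake earlier and also make collisions more likely, so early braking looks harmful in the raw data even though forcing it would help. All probabilities and names are invented for illustration.

```python
# Invented probabilities for a toy world with one confounder (wet road).
P_WET = 0.3
P_EARLY_GIVEN_WET = {True: 0.9, False: 0.2}  # P(early braking | wet?)
P_COLLISION = {                              # P(collision | early braking?, wet?)
    (True, True): 0.10, (False, True): 0.30,
    (True, False): 0.01, (False, False): 0.03,
}


def p_wet(wet):
    return P_WET if wet else 1.0 - P_WET


def p_early(early, wet):
    p = P_EARLY_GIVEN_WET[wet]
    return p if early else 1.0 - p


def observational(early):
    """P(collision | early): conditioning on what drivers happened to do."""
    joint = sum(p_wet(w) * p_early(early, w) * P_COLLISION[(early, w)]
                for w in (True, False))
    marginal = sum(p_wet(w) * p_early(early, w) for w in (True, False))
    return joint / marginal


def interventional(early):
    """P(collision | do(early)): braking is set directly, so road wetness
    keeps its natural distribution instead of tracking driver behavior."""
    return sum(p_wet(w) * P_COLLISION[(early, w)] for w in (True, False))


for early in (True, False):
    print(f"early={early}: observed {observational(early):.3f}, "
          f"intervened {interventional(early):.3f}")
```

In this toy world the confounder is known, so the interventional quantity can be computed by adjusting for it. In real driving, many confounders are unobserved, which is why actually intervening, or simulating interventions safely, matters so much.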

Real-Time Computational Demands

Evaluating complex causal relationships and running counterfactual scenarios in the milliseconds required for safe driving is computationally intensive. It demands highly optimized software and powerful, specialized hardware that can perform these reasoning tasks without introducing dangerous latency.
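Some back-of-the-envelope arithmetic shows why latency matters. The sketch below adds up a hypothetical per-stage latency budget and converts the total into the distance the vehicle travels before it can act. The stage names, timings, and the 100 ms budget are assumptions, not measurements from any real system.

```python
# Hypothetical per-stage latencies in milliseconds; not measured values.
STAGE_LATENCY_MS = {"perception": 40.0, "causal_reasoning": 35.0, "planning": 15.0}
BUDGET_MS = 100.0  # assumed end-to-end budget


def distance_during_latency(speed_kmh, latency_ms):
    """Meters traveled at constant speed while the pipeline is still computing."""
    return (speed_kmh / 3.6) * (latency_ms / 1000.0)


total_ms = sum(STAGE_LATENCY_MS.values())
within_budget = total_ms <= BUDGET_MS
blind_distance_m = distance_during_latency(50.0, total_ms)

print(f"total latency: {total_ms:.0f} ms (within budget: {within_budget})")
print(f"distance covered at 50 km/h: {blind_distance_m:.2f} m")
```

Even when the assumed pipeline fits its budget, a car at 50 km/h covers more than a meter before any decision takes effect, so every extra millisecond of reasoning has a physical cost.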

The Future of Autonomous AI: A New Kind of Intelligence

Models like AlpamayoR1 signal a pivotal shift in the philosophy behind autonomous AI. The industry is moving away from the idea of creating a perfect driver through brute-force data memorization and toward creating a system with a genuine, albeit simplified, understanding of the world.

This transition from pattern-matching perception to causal reasoning is the next logical step. It’s the difference between an apprentice who can mimic their master’s actions and a master who understands the principles behind them. By embedding the principles of cause and effect into their core logic, autonomous vehicles can become more robust, adaptable, and ultimately, far safer partners on our roads. The journey is complex, but the destination—a world of truly intelligent, trustworthy autonomous systems—is finally coming into view.

Frequently Asked Questions (FAQ)

Is AlpamayoR1 a real, commercially available model?

AlpamayoR1, as described in this article, is a conceptual model representing the next frontier in AI research for autonomous systems, not a commercial product. Teams at companies like Waymo and Cruise, as well as university research groups, are actively working on integrating causal reasoning into driving systems. AlpamayoR1 embodies the principles that are guiding that next wave of development.

How is causal reasoning different from reinforcement learning (RL) in self-driving cars?

Reinforcement learning teaches an AI agent to take actions in an environment to maximize a cumulative reward. While effective, an RL agent often learns a complex set of correlational policies without understanding the “why.” For instance, it learns that braking behind a stopped car yields a reward (avoids a penalty). Causal reasoning complements this by providing the underlying model of why the car ahead stopped (e.g., brake lights, traffic signal), allowing the AI to generalize its learned behavior to new situations more effectively.

Will a causal reasoning AI make cars overly cautious and slow?

Not necessarily. The goal is not just caution, but appropriate action based on a deeper understanding. A causal model could actually make a car more assertive and efficient in situations where it can confidently predict the behavior of other agents. For example, when merging onto a highway, it could better infer the intentions of other drivers to find a safe and decisive opening, rather than hesitating due to uncertainty.

What is the biggest barrier to implementing causal reasoning AI in autonomous driving systems?

The two biggest barriers are data and complexity. First, building the comprehensive causal “world model” is an immense task that requires not just massive amounts of driving data, but a structured understanding of real-world physics and social interactions. Second, the computational overhead of running these complex reasoning processes in real time on in-vehicle hardware is a significant engineering challenge that requires further advances in both software optimization and specialized chips.

Conclusion: Building the Future of Intelligent Automation

The journey toward fully autonomous vehicles is a marathon, not a sprint. While deep learning and machine learning for perception have brought us incredibly far, they have also revealed the limits of a purely correlational approach. Conceptual models like AlpamayoR1 illuminate the path forward, a path paved with causal reasoning, explainability, and a deeper, more robust form of artificial intelligence. It’s about teaching machines not just to see the road, but to understand it.

This evolution from simple pattern recognition to genuine comprehension is not just limited to cars. It’s the future of robotics, logistics, and intelligent automation. At KleverOwl, we believe in building systems that don’t just execute tasks, but understand them. If you’re looking to integrate next-generation intelligence into your operations, our experts are ready to help.
